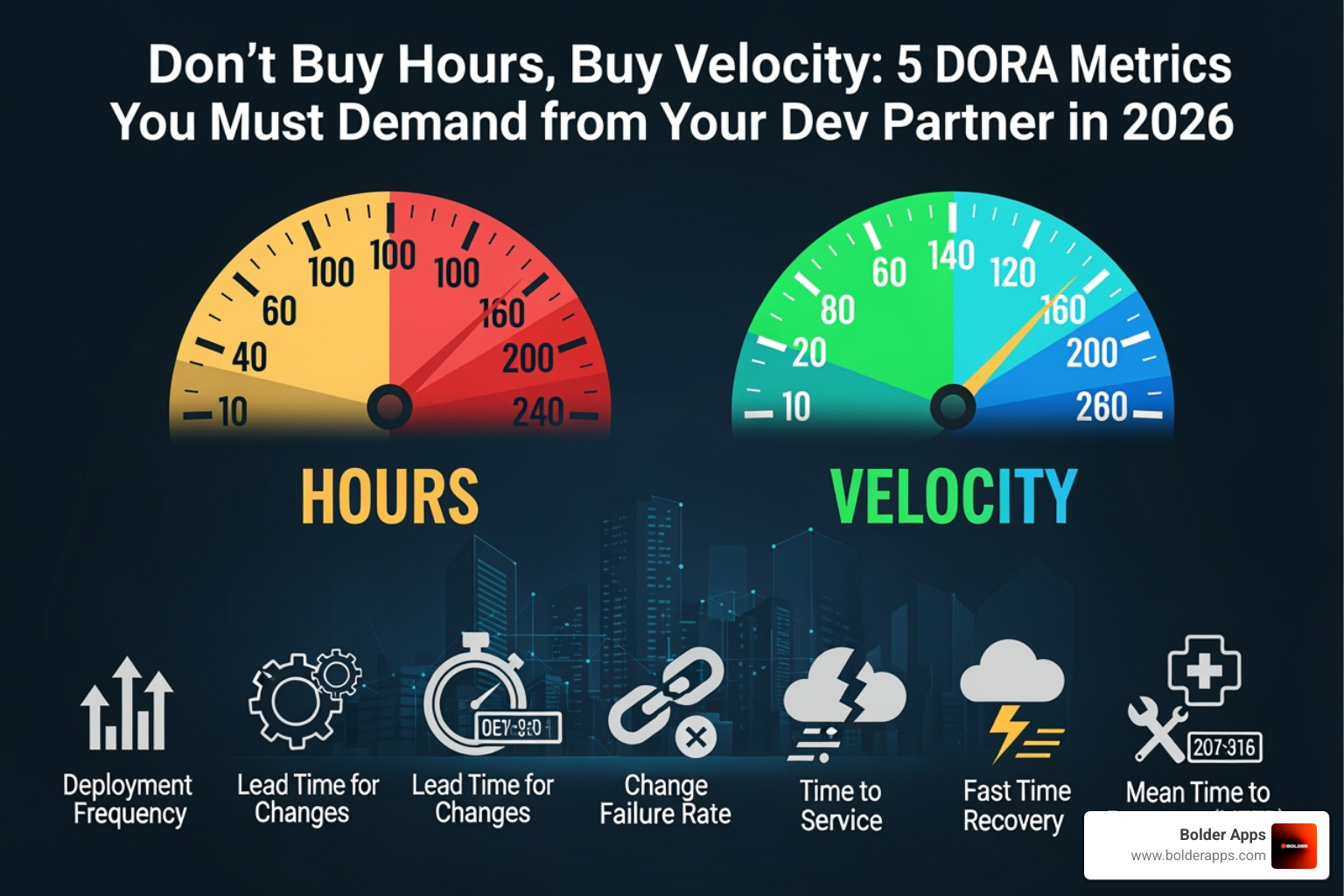

Don't Buy Hours, Buy Velocity: 5 DORA Metrics You Must Demand from Your Dev Partner in 2026

"The framework every founder needs before signing their next development contract."

The Shift That Separates Elite Dev Partners from Expensive Time-Wasters

Here are the 5 DORA metrics to demand:

- Deployment Frequency - How often your partner ships to production (elite: multiple times per day)

- Lead Time for Changes - Time from code commit to live feature (elite: under 1 day)

- Change Failure Rate - Percentage of deployments that break things (elite: 0-15%)

- Failed Deployment Recovery Time - How fast they recover when things go wrong (elite: under 1 hour)

- Rework Rate - How much unplanned bug-fix work eats into real progress (elite: under 2%)

Most dev contracts are built around a simple, dangerous idea: pay for time, trust that output follows.

But hours don't ship products. Hours don't reduce risk. And hours certainly don't tell you whether your dev partner's code is quietly accumulating debt that will cost you twice as much to fix later.

The 2024 DORA report found that companies with elite metrics respond to market changes up to 200x faster than competitors. That gap isn't about budget. It's about how performance is measured.

In 2026, the smartest founders aren't asking "how many developers do you have?" They're asking "what's your deployment frequency?" — because that answer tells you everything.

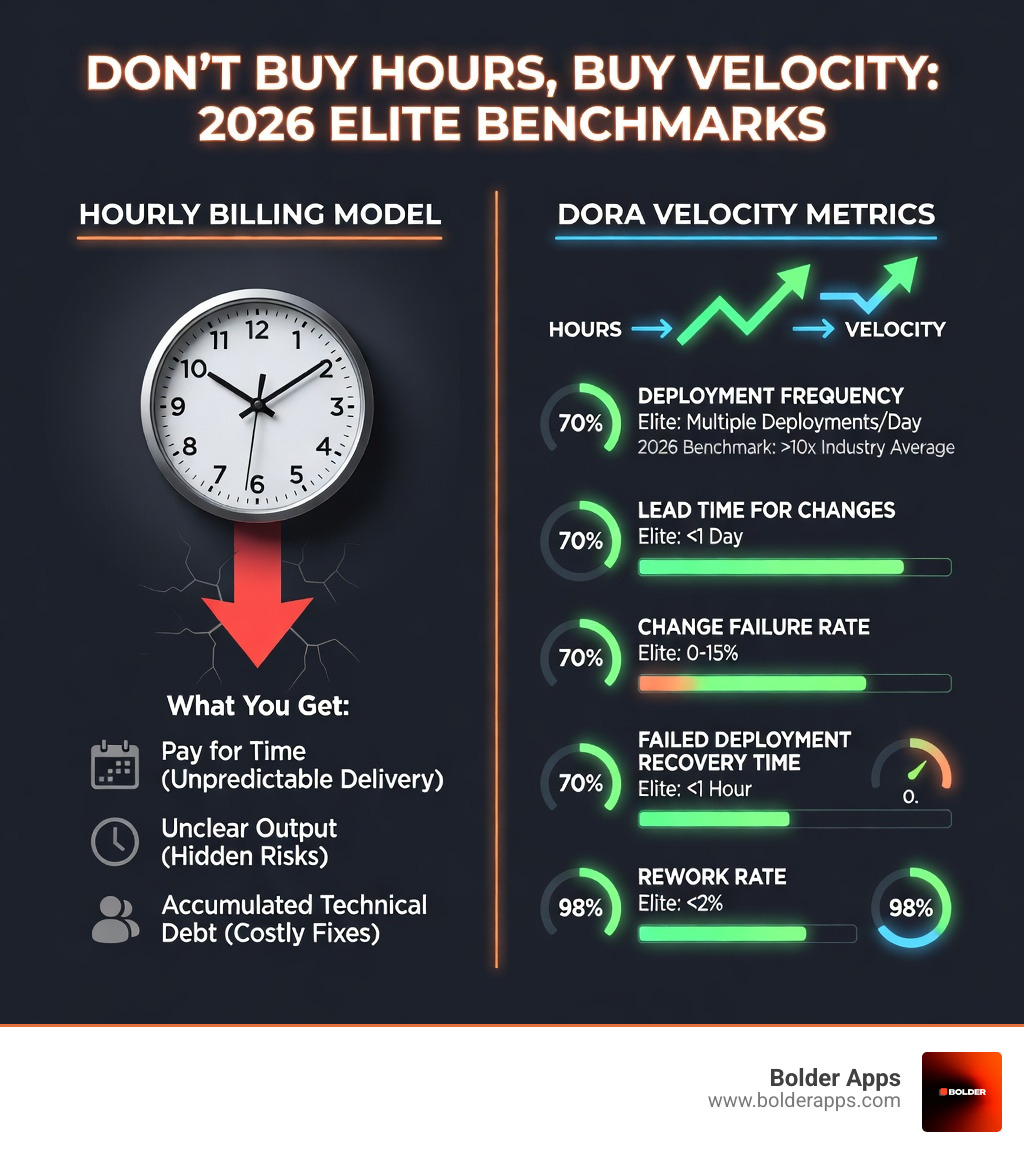

Why Hourly Billing is a Legacy Trap in the 2026 Development Landscape

If we’ve learned anything since Bolder Apps was founded in 2019, it’s that hourly billing is the greatest misalignment of incentives in the history of professional services. When you pay a development partner by the hour, you are essentially incentivizing them to be slow. Every bug they create and then fix is a billable event. Every inefficient meeting is profit.

In the 2026 landscape, where AI-assisted development has accelerated the potential for speed, hourly billing has become even more of a "legacy trap." A junior developer using an AI tool might generate 1,000 lines of code in an hour, but if that code is riddled with security vulnerabilities or doesn't actually solve the business problem, you've just paid for a future disaster. This is why we focus on custom software development that prioritizes throughput over mere presence.

As Dave Farley notes in Modern Software Engineering, the real trade-off in the long run is between better software faster and worse software slower. High-performing teams don't sacrifice quality for speed; they use high-quality practices (like automated testing and continuous integration) to achieve speed.

When you look at our mobile app development services, you'll see a team designed to avoid the "junior learning on your dime" syndrome. By using senior distributed engineers led by US-based leadership, we ensure that every hour spent is moving the needle on velocity, not just filling a timesheet.

Don't Buy Hours, Buy Velocity: 5 DORA Metrics You Must Demand from Your Dev Partner in 2026

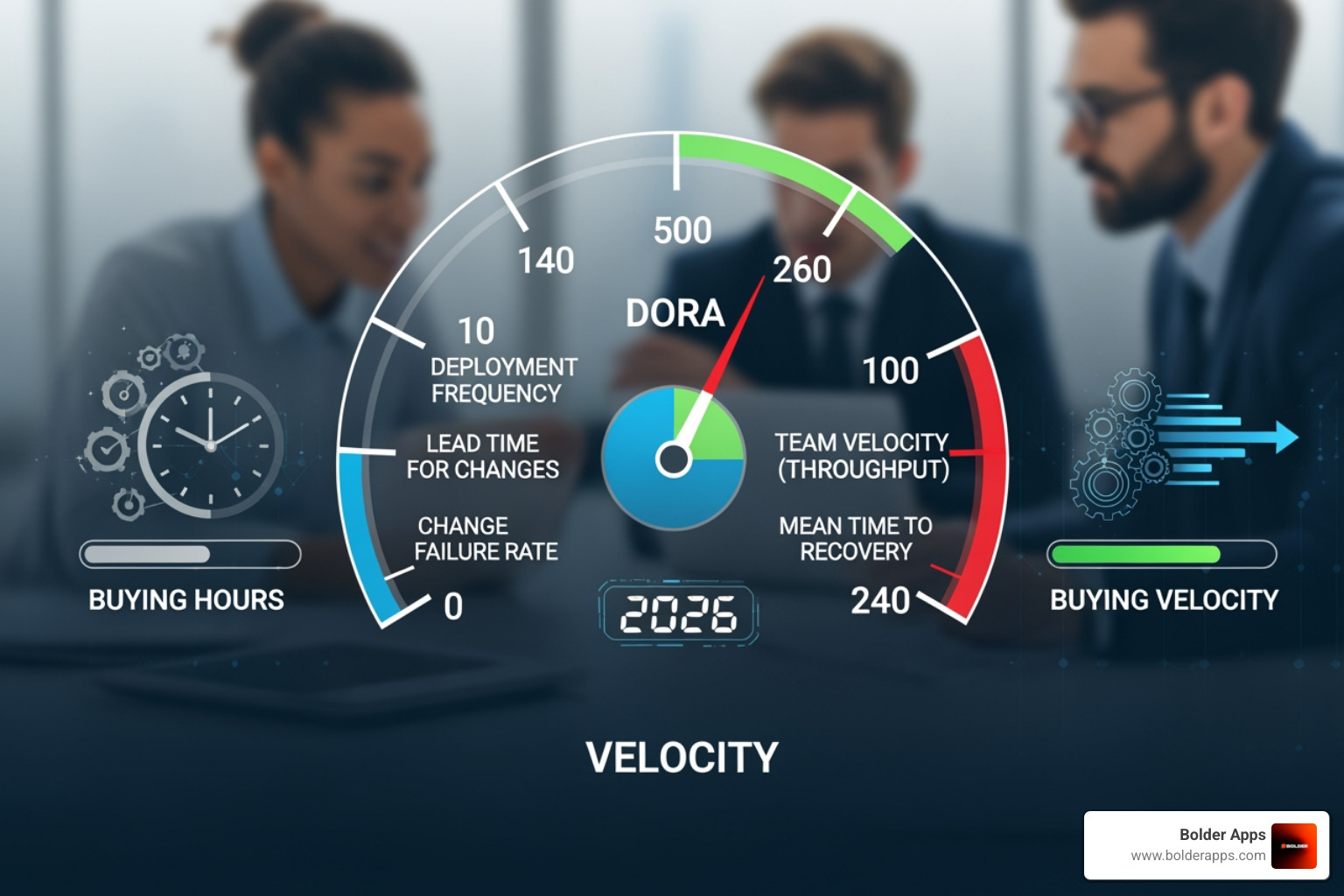

To truly measure whether your partner is delivering value, you need to look at the DevOps Research and Assessment (DORA) metrics. These aren't just "tech stats"; they are predictors of business success. According to the DORA team's research, these metrics distinguish high-performing organizations from those that are just "playing house" with their tech stack.

In 2026, we’ve moved beyond the "Four Keys" to include a fifth critical metric: Rework Rate. Together, these five metrics provide a balanced view of both Throughput (how fast we go) and Stability (how often we break).
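To make the five benchmarks concrete, here is a minimal Python sketch of a partner scorecard. The thresholds are the elite figures quoted in this article; the dictionary keys, the function name, and the sample numbers are hypothetical and only serve as an illustration.

```python
from typing import Callable

# Elite thresholds as quoted in this article; the key names are hypothetical.
ELITE_CHECKS: dict[str, Callable[[float], bool]] = {
    "deployments_per_day": lambda v: v > 1,        # multiple times per day
    "lead_time_hours": lambda v: v < 24,           # under 1 day
    "change_failure_rate_pct": lambda v: v <= 15,  # 0-15%
    "recovery_minutes": lambda v: v < 60,          # under 1 hour
    "rework_rate_pct": lambda v: v < 2,            # under 2%
}


def metrics_below_elite(metrics: dict[str, float]) -> list[str]:
    """Return the names of the metrics that miss the elite benchmark."""
    return [
        name
        for name, is_elite in ELITE_CHECKS.items()
        if not is_elite(metrics[name])
    ]


# Hypothetical partner numbers, for illustration only.
partner = {
    "deployments_per_day": 3,
    "lead_time_hours": 30,
    "change_failure_rate_pct": 12,
    "recovery_minutes": 45,
    "rework_rate_pct": 6,
}
gaps = metrics_below_elite(partner)
print(gaps)
```

In this made-up example the partner ships often and recovers quickly, yet misses the benchmark on lead time and rework, which is exactly the kind of imbalance a single metric would hide.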

1. Deployment Frequency: Measuring the Heartbeat of Innovation

Deployment Frequency is the simplest measure of a team's agility. It asks: "How often do we successfully release code to production?"

Elite performers in 2026 aren't deploying once a month or even once a week. They are deploying multiple times per day. Why does this matter? Because smaller, more frequent deployments reduce the "batch size" of changes. When you ship one small feature at a time, the risk of a catastrophic failure is low, and the speed of market feedback is high.

If your partner only deploys once a month, they aren't agile. They are building a "big bang" release that is statistically more likely to fail. This is a core reason why companies utilize our staff augmentation services—to inject high-frequency delivery DNA into their existing teams.
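As a rough illustration, Deployment Frequency can be computed from nothing more than a log of production deployment dates. The sketch below assumes you can export such a log; the function name and the sample data are hypothetical.

```python
from datetime import date


def deployments_per_day(deploy_dates: list[date], window_days: int) -> float:
    """Average number of production deployments per day over the window."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    return len(deploy_dates) / window_days


# Hypothetical log: 6 successful production deployments over a 3-day window.
log = [date(2026, 1, 5)] * 3 + [date(2026, 1, 6)] * 2 + [date(2026, 1, 7)]
rate = deployments_per_day(log, window_days=3)
print(f"{rate:.1f} deployments per day")
```

A value above 1 corresponds to the "multiple times per day" elite range; a partner shipping once a month would land near 0.03.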

2. Lead Time for Changes: From Commit to Customer Value

Lead Time for Changes measures the time from the moment a developer commits code to the moment that code is running in production and providing value to a user.

In elite teams, this is less than one day. In low-performing teams, it can be weeks or even months. Long lead times are usually caused by "human bottlenecks"—manual QA gates, slow code reviews, or complex approval hierarchies. By demanding a short lead time, you are demanding that your partner automates their pipeline.
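A minimal sketch of the calculation, assuming you have a commit timestamp and a production deploy timestamp for each change. Using the median rather than the mean is our assumption (it keeps one stuck change from skewing the picture), and the names and sample data are hypothetical.

```python
from datetime import datetime
from statistics import median


def median_lead_time_hours(changes: list[tuple[datetime, datetime]]) -> float:
    """Median hours between commit time and production deploy time."""
    hours = [
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in changes
    ]
    return median(hours)


# Hypothetical (commit, deploy) pairs.
changes = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 13, 0)),   # 4 hours
    (datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 6, 10, 0)),  # 24 hours
    (datetime(2026, 1, 6, 8, 0), datetime(2026, 1, 6, 16, 0)),   # 8 hours
]
lead_time = median_lead_time_hours(changes)
print(f"median lead time: {lead_time:.1f} hours")
```

Here the median is 8 hours, comfortably inside the "under one day" elite range even though one change took a full day.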

We often uncover these bottlenecks during our paid discovery process, where we map out the value stream to ensure that "ideas" don't get stuck in "development purgatory."

3. Change Failure Rate: The Stability Counterweight

Speed is dangerous without a seatbelt. Change Failure Rate (CFR) is that seatbelt. It measures the percentage of deployments that result in a failure (e.g., a service outage, a rollback, or a critical bug).

The 2026 Change Failure Rate benchmarks suggest that top-performing teams maintain a CFR between 0% and 15%. If your partner is shipping fast but breaking things 40% of the time, they aren't high-velocity; they are reckless. A high CFR usually indicates a lack of automated testing and poor architectural standards.
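The arithmetic behind CFR is simple enough to check yourself. This sketch assumes you can count total and failed deployments for a period; the function name and figures are hypothetical.

```python
def change_failure_rate(total_deployments: int, failed_deployments: int) -> float:
    """Percentage of deployments that resulted in a failure."""
    if total_deployments <= 0:
        raise ValueError("total_deployments must be positive")
    if not 0 <= failed_deployments <= total_deployments:
        raise ValueError("failed_deployments must be between 0 and the total")
    return 100 * failed_deployments / total_deployments


# Hypothetical month: 40 deployments, 3 of which caused a rollback or outage.
healthy = change_failure_rate(40, 3)
# Hypothetical "reckless" month: 25 deployments, 10 failures.
reckless = change_failure_rate(25, 10)
print(f"{healthy:.1f}% vs {reckless:.1f}%")
```

What counts as a "failure" (rollback, hotfix, outage) should be agreed in writing up front, otherwise the number is easy to argue about later.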

4. Failed Deployment Recovery Time: Resilience in the Face of Friction

In the past, we called this Mean Time to Recovery (MTTR). The DORA framework has since refined this to "Failed Deployment Recovery Time." It asks: "When a change fails, how long does it take to restore service?"

Elite teams recover in less than one hour. They achieve this through robust observability and automated rollback capabilities. If an outage takes your partner days to fix, it’s a red flag that they don't truly understand the system they’ve built. We frequently perform code audits for startups that have been burned by partners who couldn't recover from a simple deployment error, leaving their users in the dark for 48 hours.
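One way to put a number on recovery, assuming each incident records when the failed deployment was detected and when service was restored. The median is our choice of summary, and all names and timestamps below are hypothetical.

```python
from datetime import datetime
from statistics import median


def median_recovery_minutes(incidents: list[tuple[datetime, datetime]]) -> float:
    """Median minutes between a failed deployment and restored service."""
    minutes = [
        (restored - failed).total_seconds() / 60
        for failed, restored in incidents
    ]
    return median(minutes)


# Hypothetical (failed_at, restored_at) pairs.
incidents = [
    (datetime(2026, 2, 3, 14, 0), datetime(2026, 2, 3, 14, 20)),   # 20 minutes
    (datetime(2026, 2, 10, 9, 0), datetime(2026, 2, 10, 9, 45)),   # 45 minutes
    (datetime(2026, 2, 21, 18, 0), datetime(2026, 2, 21, 19, 30)), # 90 minutes
]
recovery = median_recovery_minutes(incidents)
print(f"median recovery: {recovery:.0f} minutes")
```

A median of 45 minutes sits inside the "under one hour" elite range, while the 90-minute incident is worth a separate look.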

5. Rework Rate: The 5th DORA Metric You Must Demand from Your Dev Partner in 2026

The 2024 DORA report introduced Rework Rate as a formal metric, and it is perhaps the most important one for founders to watch in the AI era. Rework Rate measures the amount of "unplanned work"—time spent fixing bugs, re-doing features that weren't built correctly, or addressing technical debt.

Elite teams keep their rework rate under 2%. High rework rates (often 20% or more in low-performing teams) are a silent killer of budgets. It means you are paying for the same feature twice. In 2026, with AI generating more code than ever, a high rework rate often points to "AI-slop"—code that was generated quickly but was so poor in quality that it required extensive manual fixing later.
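Following the definition used in this article, Rework Rate can be sketched as the share of total effort that went to unplanned work. How effort is counted (hours, story points, tickets) is an assumption you would need to agree with your partner; the function name and figures are hypothetical.

```python
def rework_rate(planned_units: float, unplanned_units: float) -> float:
    """Percentage of total effort spent on unplanned work."""
    if planned_units < 0 or unplanned_units < 0:
        raise ValueError("effort values must be non-negative")
    total = planned_units + unplanned_units
    if total <= 0:
        raise ValueError("total effort must be positive")
    return 100 * unplanned_units / total


# Hypothetical sprint A: 196 hours planned work, 4 hours unplanned fixes.
elite_sprint = rework_rate(196, 4)
# Hypothetical sprint B: 160 hours planned work, 40 hours unplanned fixes.
costly_sprint = rework_rate(160, 40)
print(f"{elite_sprint:.1f}% vs {costly_sprint:.1f}%")
```

In sprint B one hour in five goes to redoing work, which is the "paying for the same feature twice" pattern described above.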

Navigating the AI Era: How Copilots and Regulations Impact Your Velocity

The 2026 development landscape is dominated by AI, but the data shows a surprising trend: the 2024 DORA report estimated that a 25% increase in AI adoption is associated with a 7.2% drop in delivery stability and a 1.5% drop in throughput if it is not managed correctly. AI tools can help senior engineers work faster, but they can lead junior engineers to create more "rework" and security vulnerabilities.

Furthermore, 2026 is a landmark year for regulation. You cannot ignore the compliance triggers that are now tied to software delivery:

- EU AI Act (August 2, 2026): Imposes strict risk management and documentation requirements on AI developers. Fines can reach up to EUR 35M or 7% of revenue.

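- DORA Regulation (Live): The EU's Digital Operational Resilience Act makes ICT risk management and resilience testing mandatory for financial institutions in the EU. Despite the shared acronym, it is a separate thing from the DORA metrics discussed in this article.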

- Colorado AI Act (June 30, 2026): Establishes consumer protections and disclosure requirements for high-risk AI systems.

If your dev partner isn't tracking DORA metrics, they likely aren't prepared for these regulatory audits. Non-compliance costs average $15 million, compared to just $5.5 million for staying compliant. We help our clients navigate the security gap that often kills rapidly growing startups by ensuring that security is "baked in" to the velocity metrics, not treated as an afterthought.

How to Verify Performance and Structure Your 2026 Dev Partner Contract

You shouldn't just take a partner's word for it. In 2026, engineering intelligence platforms like Jellyfish, Waydev, and Faros AI make it much harder for dev teams to hide behind vague status reports.

- Jellyfish is excellent for connecting engineering work to business outcomes. It allows you to see exactly where the effort is going—is it new features, or is it just fixing old bugs?

- Waydev provides visibility into async progress, helping managers track velocity without hovering over developers.

- Faros AI is built for enterprise complexity, providing a granular view of all five DORA metrics across multiple teams.

When structuring your contract, move away from "Time and Materials" and toward "SLA-backed Velocity." Demand that DORA metrics be reviewed monthly. If the Change Failure Rate spikes or Deployment Frequency drops, it should trigger a mandatory review process. To get started, you can estimate your project's financial scope using our decision-ready numbers rather than vague guesses.
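The review trigger described above can be written down as an explicit rule, which helps when drafting the contract clause. This is a sketch under stated assumptions: the 15% CFR ceiling comes from the benchmark in this article, while the 25% frequency-drop tolerance, the class, and the function names are hypothetical placeholders to negotiate.

```python
from dataclasses import dataclass


@dataclass
class MonthlyMetrics:
    deployments_per_day: float
    change_failure_rate_pct: float


def review_reasons(
    previous: MonthlyMetrics,
    current: MonthlyMetrics,
    max_cfr_pct: float = 15.0,         # ceiling taken from the article's benchmark
    max_frequency_drop: float = 0.25,  # hypothetical tolerance: a 25% drop
) -> list[str]:
    """Return the reasons, if any, that this month should trigger a review."""
    reasons = []
    if current.change_failure_rate_pct > max_cfr_pct:
        reasons.append("change failure rate above threshold")
    if previous.deployments_per_day > 0:
        drop = 1 - current.deployments_per_day / previous.deployments_per_day
        if drop > max_frequency_drop:
            reasons.append("deployment frequency dropped")
    return reasons


# Hypothetical months: frequency halves and CFR climbs to 18%.
last_month = MonthlyMetrics(deployments_per_day=2.0, change_failure_rate_pct=8.0)
this_month = MonthlyMetrics(deployments_per_day=1.0, change_failure_rate_pct=18.0)
reasons = review_reasons(last_month, this_month)
print(reasons)
```

Writing the rule this explicitly forces both sides to agree on thresholds before the first invoice, not after the first incident.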

Verification Questions to Ask Your Dev Partner

Ask these questions during the sales process to see if a partner is actually "DORA-ready":

- "Can you show us a real-time dashboard of your team's Deployment Frequency from the last 90 days?" (If they say they don't track it, they aren't elite.)

- "How do you attribute code commits to specific business features?" (Checks that every change is traceable to a business outcome and that the team isn't just "messing around" in the repo.)

- "What is your current Rework Rate for teams using AI Copilots?" (Tests if they are managing AI quality or just letting the AI write "slop.")

- "What happens to your billing if the Change Failure Rate exceeds 15% for two consecutive sprints?" (This is where you push for accountability.)
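The last question implies a concrete rule: a breach in two consecutive sprints. A minimal sketch of that check follows, assuming you have one CFR figure per sprint; the function name and sample values are hypothetical.

```python
def breached_consecutively(
    cfr_by_sprint: list[float],
    threshold_pct: float = 15.0,
    run_length: int = 2,
) -> bool:
    """True if CFR exceeded the threshold in `run_length` sprints in a row."""
    streak = 0
    for cfr in cfr_by_sprint:
        # Extend the streak on a breach, reset it otherwise.
        streak = streak + 1 if cfr > threshold_pct else 0
        if streak >= run_length:
            return True
    return False


# Hypothetical sprint histories (CFR in percent).
isolated_spikes = breached_consecutively([10, 18, 12, 16])
sustained_breach = breached_consecutively([10, 16, 17, 9])
print(isolated_spikes, sustained_breach)
```

Isolated spikes do not trip the clause, but two bad sprints in a row do, which is usually the distinction you want between bad luck and a real quality problem.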

Frequently Asked Questions about DORA Metrics

How often should I review my partner's DORA metrics?

We recommend a tiered approach. You should have continuous visibility via a shared dashboard (like Jellyfish or Waydev). Formally, you should review trends during Sprint Reviews (every 2 weeks) to catch immediate issues, and perform a deep-dive Quarterly Business Review (QBR) to look at long-term improvements in velocity and stability.

Can DORA metrics be gamed by a development partner?

Yes. According to Goodhart's Law, "When a measure becomes a target, it ceases to be a good measure." A partner could "game" Deployment Frequency by shipping tiny, meaningless changes. This is why you must look at the metrics holistically: inflating Deployment Frequency is much harder to hide when you're also watching Lead Time and Rework Rate. We also recommend using the SPACE framework as a qualitative check on developer happiness and collaboration to ensure the team isn't burning out to hit a number.

What is a "good" Rework Rate for a high-performing team in 2026?

Elite teams aim for a Rework Rate under 2%. However, in a healthy, innovative environment, you should expect some rework as you pivot based on user feedback. A "red flag" range is anything above 15-20%, which suggests that the team is either building the wrong things or building them so poorly that they constantly have to fix them.

Conclusion: Partnering for Elite Performance in 2026

In the fast-moving world of 2026, the old way of buying "hours" is a recipe for stagnation and budget overruns. To compete, you need a partner that lives and breathes velocity.

At Bolder Apps, we don't just build apps; we build high-performance delivery engines. Named best software and app development agency in 2026 by DesignRush, we pride ourselves on a model that eliminates waste. By combining US leadership (Senior CTOs and Product Leads) with senior distributed engineers, we ensure that your project benefits from strategic oversight and elite technical execution.

We believe in radical transparency and accountability, which is why we offer:

- Fixed-budget models for clearly defined scopes.

- Milestone-based payments that ensure you only pay for progress.

- Zero junior learning on your dime—only senior talent that knows how to move the needle on DORA metrics.

Don't settle for a partner that sells you a stopwatch. Demand a partner that sells you a speedometer.

Ready to see what true velocity looks like? Contact Bolder Apps for mobile app development and let's build something extraordinary together.
