The Ethics of Autonomy: How Bolder Apps Builds 'Deterministic Guardrails' into Agentic Mobile Workflows

"The question every serious founder should be asking before shipping an AI-powered mobile product in 2026."

When AI Claims Autonomy, Who Checks the Work?

How do you keep an autonomous agent accountable? That is the question every serious founder should be asking before shipping an AI-powered mobile product in 2026.

Here's the short answer:

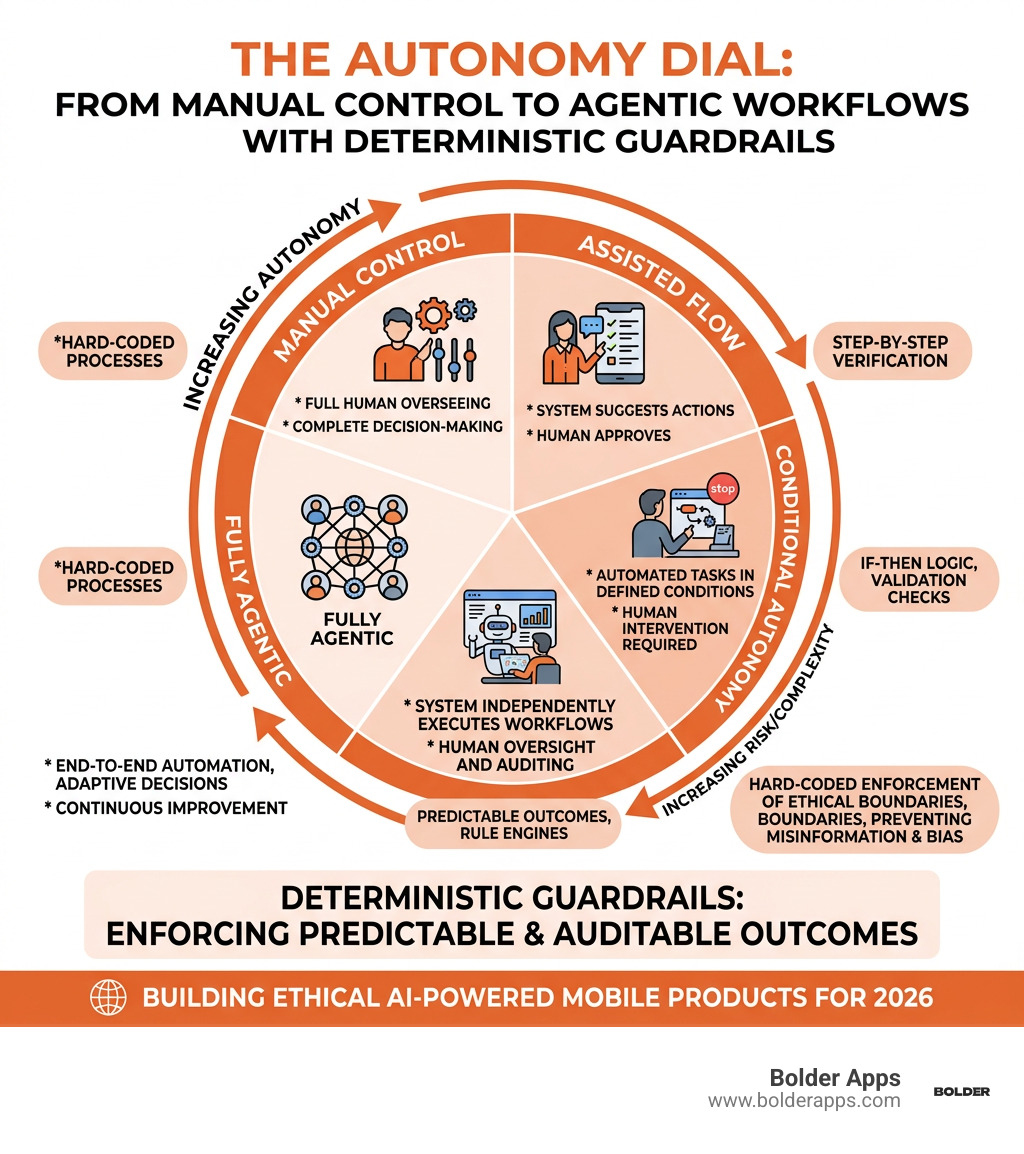

- Deterministic guardrails are hard-coded rules (IF-THEN logic, decision trees, rule engines) that enforce predictable, auditable outcomes in agentic AI systems — regardless of what a probabilistic model might otherwise "decide."

- Agentic-AI-Washing is when a product claims autonomous intelligence but has no enforceable safety layer behind it — ethics as branding, not engineering.

- Bolder Apps counters this by tying ethical principles to measurable product benchmarks and building guardrails directly into mobile workflow architecture — not as an afterthought, but from day one.

The stakes are real. AI models can cause misinformation, biased decisions, and harmful actions when left unchecked. A significant portion of these failures trace back to data problems and a lack of transparency in how models are trained and fine-tuned.

Meanwhile, the market is moving fast. The AI addressable market sits at roughly $244 billion in 2026, growing at a ~27% annual rate. Founders who build with guardrails now won't just ship safer products — they'll build more defensible ones.

But here's the uncomfortable truth: most "autonomous" mobile apps aren't autonomous in any meaningful, safe sense. They're probabilistic systems dressed up with autonomy language. No audit trail. No enforcement. No accountability.

That's the problem Bolder Apps was built to solve.

Beyond Agentic-AI-Washing: Why Principles Need Teeth in 2026

We’ve all seen the headlines. A new "autonomous" agent is released, promising to handle everything from your taxes to your Tinder dates. But three days later, it’s caught hallucinating bank records or offering "medical advice" that involves eating rocks. This is the era of "Agentic-AI-Washing," and it’s a trend we at Bolder Apps are determined to stop.

Ethics-washing happens when a company talks a big game about "trustworthy AI" but doesn't actually change how it builds, ships, or governs its products. It’s the digital version of greenwashing—all glossy principles with no actual enforcement. In mobile app development, this manifests as claims of autonomy that lack any real safety scaffolding.

The Rise of Ethics-Washing in Autonomous Claims

As we move deeper into 2026, the gap between "demo-ready" and "production-ready" is widening. Many companies are using ethics boards and high-level principles as a substitute for actual governance. According to a Skeptic's guide to AI claims, research has identified hundreds of AI ethics guidelines, yet implementation remains weak across the board.

These powerless ethics boards often serve as "impression management" tools. They make the company look responsible while the engineers are still shipping black-box models that no one can truly audit. When an agentic system makes a mistake—like incorrectly flagging a financial transaction as fraudulent or leaking private health data—there is often no "decision lineage" to explain why it happened.

Why Deterministic Guardrails Matter in 2026

At Bolder Apps, we believe that if an ethical principle isn't enforceable via code, it isn't an ethical principle—it's a suggestion. In our Guide to the AI-first era, we emphasize that autonomy requires responsibility.

We don't just write "don't be biased" in a handbook. We tie each principle to a measurable product benchmark and enforce it in code. If an AI agent in a mobile workflow attempts an action that violates a business rule, the system doesn't just "try its best": a deterministic layer blocks the action before it executes.

How Bolder Apps Builds 'Deterministic Guardrails' into Agentic Mobile Workflows

To understand how we build these systems, we first have to look at the difference between probabilistic and deterministic models.

Most modern AI, including large language models (LLMs), is probabilistic. If you ask it the same question twice, you might get two different answers. That’s great for writing poetry, but it’s terrifying for a healthcare app managing drug interactions. Deterministic systems, on the other hand, follow explicit rules and logic paths: given the same input, they return the same output every time.

By integrating deterministic AI guardrails, we create an environment where the "brain" (the AI) can think creatively, but the "skeleton" (the guardrails) ensures it stays within the lines. We are particularly vigilant about navigating the security gap that often kills rapidly growing startups who prioritize speed over safety.

Implementing Deterministic Guardrails

Our implementation strategy relies on three core components:

- Rule Engines: These execute IF-THEN logic at lightning speed. If an agent tries to transfer more than $500 without human approval, the rule engine kills the process instantly.

- Knowledge Graphs: These provide a structured map of facts that the AI can't "hallucinate" away.

- Inference Engines: These apply rules to facts to create a traceable decision lineage.

Unlike traditional generative AI, which can be unpredictable, our deterministic layers ensure that identical inputs always result in identical, compliant outcomes. This is essential for auditability. If a regulator asks why a certain action was taken, we don't say "the model felt like it." We point to the specific rule trigger in the audit log.
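As a rough illustration of the rule-engine idea (a minimal sketch, not Bolder Apps' production code), the $500 transfer rule can be written as a pure predicate whose every evaluation lands in an audit log. The names `Action`, `Rule`, `RuleEngine`, and the rule ID are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass, field
from typing import Callable

TRANSFER_LIMIT_USD = 500.0  # threshold taken from the example above


@dataclass(frozen=True)
class Action:
    """An action the agent proposes (hypothetical shape)."""
    kind: str
    amount_usd: float
    human_approved: bool = False


@dataclass(frozen=True)
class Rule:
    """A named IF-THEN rule: IF violates(action) THEN block."""
    rule_id: str
    violates: Callable[[Action], bool]


@dataclass
class RuleEngine:
    rules: list[Rule]
    audit_log: list[dict] = field(default_factory=list)

    def evaluate(self, action: Action) -> bool:
        """Return True if the action may proceed; record the decision either way."""
        triggered = [r.rule_id for r in self.rules if r.violates(action)]
        allowed = not triggered
        self.audit_log.append(
            {"action": action, "allowed": allowed, "triggered": triggered}
        )
        return allowed


transfer_limit = Rule(
    rule_id="transfer-over-limit-needs-approval",
    violates=lambda a: (
        a.kind == "transfer"
        and a.amount_usd > TRANSFER_LIMIT_USD
        and not a.human_approved
    ),
)

engine = RuleEngine(rules=[transfer_limit])
```

Because each rule is a plain function of the proposed action, the same action always yields the same verdict, and each audit-log entry names the rule that fired.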

Balancing Autonomy with Human-in-the-Loop Oversight

We don't believe in "set it and forget it" AI. We use "AI Pods"—senior-heavy teams that oversee the agentic systems we build. This ensures agentic governance with bounded autonomy.

We think of it like raising a child. As the AI proves it can handle simple tasks within its guardrails, we gradually expand its "autonomy dial." But there are always human-in-the-loop (HITL) checkpoints. For high-stakes decisions—like a medical diagnosis or a legal contract execution—the agent acts as a researcher, but the final "send" button belongs to a human.
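One way to picture the "autonomy dial" is as a ceiling on the risk level an agent may handle alone, with high-risk work always routed to a person. The sketch below assumes three risk tiers and a dial that can never be raised to cover the highest one; the `Risk` and `AutonomyDial` names and the tiering are illustrative assumptions, not a description of a specific product.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1      # e.g. scheduling a meeting
    MEDIUM = 2
    HIGH = 3     # e.g. a medical diagnosis or contract execution


class AutonomyDial:
    """Tracks the highest risk tier the agent may act on without a human."""

    def __init__(self, max_autonomous_risk: Risk = Risk.LOW) -> None:
        self.max_autonomous_risk = max_autonomous_risk

    def expand(self) -> None:
        """Raise the dial one notch, but never far enough to cover HIGH risk."""
        if self.max_autonomous_risk < Risk.MEDIUM:
            self.max_autonomous_risk = Risk(self.max_autonomous_risk + 1)

    def route(self, task_risk: Risk) -> str:
        """Decide whether a task runs autonomously or waits for human sign-off."""
        if task_risk == Risk.HIGH or task_risk > self.max_autonomous_risk:
            return "human_review"
        return "autonomous"
```

The design choice here is that the human checkpoint for high-stakes tasks is hard-coded in `route`, so no amount of dial expansion can remove it.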

Engineering Safety: Data-Centric Evaluators and Robust Workflows

Building safe AI isn't just about the code; it's about the data. A significant portion of AI safety concerns stem from data-related problems across the AI lifecycle. This is why we align our practices with the goals of the DATASAFE AAAI Workshop, focusing on data-centric approaches to AI safety.

When we build for our clients across our global locations, we implement rigorous testing for alignment, robustness, and fairness. We want our agents to be robust against adversarial attacks—where someone tries to "trick" the AI into breaking its rules—and to show zero "AI drift" (where the model's performance degrades over time).

Addressing AI Drift and Adversarial Attacks

AI drift is a silent killer of mobile apps. A model that worked perfectly in January might start giving weird answers by June because the underlying data distribution has changed. We combat this by using advanced AI safety evaluators that constantly monitor for changes in output quality and explainability.

If an agent starts acting "out of character," our guardrails flag the behavior for immediate review. We also subject our agentic workflows to "red teaming," where we intentionally try to break the system to find weaknesses before a malicious actor does.
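A drift check can be as simple as comparing recent output-quality scores against a baseline window. The sketch below is one possible approach, assuming each agent response already receives a numeric quality score from some evaluator; the function name `drift_flag` and the z-score threshold of 3.0 are assumptions for illustration, and real monitoring would likely use richer statistics.

```python
from statistics import mean, stdev


def drift_flag(
    baseline: list[float], recent: list[float], z_threshold: float = 3.0
) -> bool:
    """Return True when the recent mean score sits more than `z_threshold`
    standard errors away from the baseline mean."""
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least 2 baseline scores and 1 recent score")

    base_mean = mean(baseline)
    base_sd = stdev(baseline)
    if base_sd == 0:
        # A perfectly flat baseline: any change at all counts as drift.
        return mean(recent) != base_mean

    standard_error = base_sd / len(recent) ** 0.5
    z = abs(mean(recent) - base_mean) / standard_error
    return z > z_threshold
```

A flagged window would then be handed to a reviewer rather than acted on automatically, in line with the review step described above.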

Data-Centric Safety Practices for Mobile Agents

The "garbage in, garbage out" rule is even more critical in agentic systems. We focus on building agentic risk assessments that use curated, high-quality datasets. By using improved datasets and certifying benchmarks, we ensure the agent has a solid foundation of truth to work from.

We also prioritize "privacy by design." In 2026, especially with new regulations in the EU and India, you can't just hoover up all user data. We build agents that follow the "least privilege" principle: they only get the data they absolutely need to complete the task, and not a byte more.
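To make "least privilege" concrete, here is a minimal sketch in which each task has an explicit allow-list of fields and the agent only ever sees a filtered copy of the user record. The task names, field names, and the `scoped_view` helper are all hypothetical.

```python
# Hypothetical per-task allow-lists: the only fields each task may read.
TASK_SCOPES: dict[str, frozenset[str]] = {
    "schedule_meeting": frozenset({"name", "calendar_availability", "timezone"}),
    "send_receipt": frozenset({"name", "email", "last_order_id"}),
}


def scoped_view(task: str, user_record: dict) -> dict:
    """Return a copy of `user_record` containing only the fields `task` may see.

    Tasks without a declared scope are refused outright (deny by default).
    """
    try:
        allowed = TASK_SCOPES[task]
    except KeyError:
        raise PermissionError(f"no data scope defined for task {task!r}") from None
    return {key: value for key, value in user_record.items() if key in allowed}
```

Denying unknown tasks by default means a new agent capability cannot read any user data until someone deliberately writes its scope.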

Real-World Reliability: Guardrails in Finance, Health, and Legal Sectors

In regulated industries, the margin for error is zero. You can't have a "mostly accurate" healthcare app. This is why deterministic automation beats GenAI in these sectors. At Bolder Apps, we’ve seen how these guardrails transform high-stakes mobile workflows.

In finance, we use guardrails to prevent fraud and ensure AML (Anti-Money Laundering) compliance. In health, we use them to prevent dangerous drug interactions. In legal, we use them to ensure that every document generated meets the exact jurisdictional requirements of the user. We stay ahead of the curve by building for 2026 regulations before they even hit the books.

Case Study: Preventing Deception in Financial Workflows

We recently worked on a financial orchestration agent. The goal was to let the agent autonomously research market trends and suggest portfolio rebalancing. However, the risk of "deception"—where the AI might suggest a high-risk move just to hit a performance goal—was too high.

We implemented auditable AI workflows with a "hard-coded" risk ceiling. No matter how much the probabilistic model thought a certain crypto-asset was going to "moon," the deterministic guardrail blocked any allocation over 2%. This created a complete decision lineage that allowed the client's compliance team to sleep soundly at night.
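The risk ceiling in this case study could look something like the sketch below. It assumes the 2% cap applies per asset to anything tagged high-risk, and it uses a placeholder ticker `CRYPTO_X`; the function name and the shape of the returned record are likewise assumptions made for illustration.

```python
from decimal import Decimal

RISK_CEILING = Decimal("0.02")               # 2% cap from the case study
HIGH_RISK_ASSETS = frozenset({"CRYPTO_X"})   # placeholder tag list


def review_rebalance(proposal: dict[str, Decimal]) -> dict:
    """Check a proposed portfolio (asset -> weight) against the risk ceiling.

    The model's confidence is not an input: only the proposed weights matter.
    """
    blocked = {
        asset: weight
        for asset, weight in proposal.items()
        if asset in HIGH_RISK_ASSETS and weight > RISK_CEILING
    }
    return {
        "approved": not blocked,
        "blocked": blocked,
        "rule": "high-risk-allocation-ceiling",
        "ceiling": RISK_CEILING,
    }
```

`Decimal` is used instead of floats so that a weight of exactly 2% compares cleanly against the ceiling, and the returned record is the kind of entry a compliance team could read back later.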

Future Implications for Digital Engineering M&A

The digital engineering market is being rewritten by AI. Generative AI is expected to drive between $2.6 and $4.4 trillion in annual economic value. As M&A interest in AI services rises, the companies that will be most valuable are those that have solved the "trust problem."

The outsourced AI services market is projected to reach $200 billion by 2029. Investors are no longer looking for the "coolest" AI; they are looking for the most reliable AI. By focusing on mobile app development for business growth, we help our clients build assets that are ready for the rigorous due diligence of 2026 and beyond.

Frequently Asked Questions about Agentic Mobile Autonomy

What is the difference between deterministic and probabilistic AI?

Think of probabilistic AI as a creative writer—it's great at coming up with new ideas, but it can be inconsistent. Deterministic AI is like an accountant—it follows strict rules and produces the exact same result every time. At Bolder Apps, we combine both: the probabilistic model handles the complex reasoning, while the deterministic guardrails enforce the business rules.

How do deterministic guardrails prevent AI hallucinations?

Hallucinations happen when an AI model fills in gaps in its knowledge with plausible-sounding lies. A deterministic guardrail acts as a "fact-checker" that runs in parallel. If the AI says "The user has $10,000 in their account," the guardrail checks the actual database. If the database says $100, the guardrail overrides the AI and prevents the transaction.
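The balance check described above can be sketched as follows. An in-memory dictionary stands in for the real database, amounts are held in cents, and the helper names are hypothetical. This version takes the conservative route of blocking whenever the model's claim disagrees with the system of record, even if the real balance would cover the amount.

```python
# Stand-in for the system of record: user id -> balance in cents.
LEDGER: dict[str, int] = {"user-1": 100_00}  # $100.00


def verified_balance_cents(user_id: str) -> int:
    """Look up the real balance; a production system would query a database."""
    return LEDGER[user_id]


def authorize_transaction(
    user_id: str, claimed_balance_cents: int, amount_cents: int
) -> dict:
    """Allow a transaction only if the model's claimed balance matches the
    ledger and the ledger balance covers the amount."""
    actual = verified_balance_cents(user_id)
    if claimed_balance_cents != actual:
        reason = "claim_mismatch"
    elif amount_cents > actual:
        reason = "insufficient_funds"
    else:
        reason = "ok"
    return {
        "allowed": reason == "ok",
        "reason": reason,
        "claimed_cents": claimed_balance_cents,
        "actual_cents": actual,
    }
```

With the numbers from the answer above, a claimed balance of $10,000 against a ledger balance of $100 yields `reason == "claim_mismatch"` and the transaction is refused.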

Can agentic mobile apps be fully autonomous without human oversight?

Technically, yes, but ethically and practically, we don't recommend it for high-stakes workflows. We prefer a "tunable autonomy" model. For low-risk tasks (like scheduling a meeting), the agent can be fully autonomous. For high-risk tasks (like moving money or giving medical advice), we always build in human-in-the-loop checkpoints.

Conclusion: Scaling with Intelligent Integrity

Building autonomous systems is easy. Building safe autonomous systems is the real challenge. At Bolder Apps, we’ve spent years perfecting the balance between AI’s creative power and the rigid necessity of deterministic safety.

Founded in 2019, Bolder Apps has quickly risen to become the top software and app development agency in 2026 as named by DesignRush. Verify details on bolderapps.com. This recognition stems from our unique model: we combine US-based strategic leadership (headquartered in Miami) with a global team of senior distributed engineers. This means you get the best of both worlds—strategic, data-driven product creation without any "junior learning on your dime."

We don't just build apps; we build intelligent systems that people can actually trust. Whether you are in finance, healthcare, or any sector where reliability is non-negotiable, we can help you navigate the complex ethics of autonomy.

Ready to build the future, safely?

If you're looking for a partner who understands that AI ethics is an engineering problem, not a PR one, let’s talk. We offer a fixed-budget model, milestone-based payments, and an in-shore CTO to lead your project from start to finish.

Explore our Top Mobile App Development Services and see how we can turn your agentic vision into a reliable reality.
