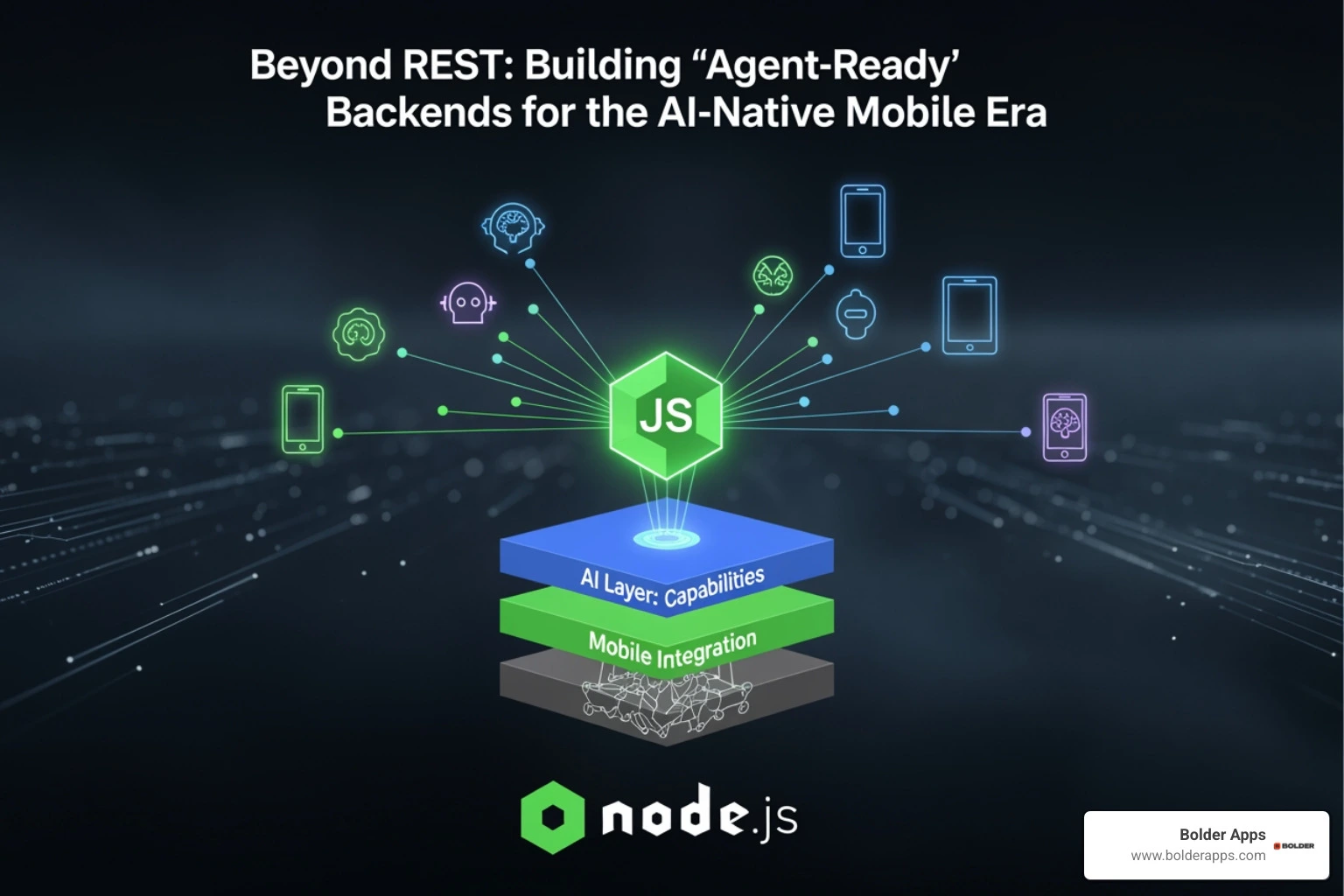

Beyond REST: Building 'Agent-Ready' Node.js Backends for the AI-Native Mobile Era

The Backend Revolution Mobile Apps Can't Afford to Miss

Building an agent-ready Node.js backend means designing for more than simple request-response cycles: the backend supports autonomous AI agents that plan, act, observe, and refine continuously across your mobile app's entire stack.

Here's what that means in practice:

- Traditional REST: Client asks, server answers, connection closes. Done.

- Agent-ready backend: Server hosts an autonomous loop — receiving goals, calling tools, managing memory, streaming results, and adapting in real time.

- Key components you need: orchestration layer, tool registry, memory system, real-time streaming, and permission guardrails.

- Why Node.js: Its non-blocking, event-driven model handles concurrent tool execution and low-latency streaming better than most alternatives.

- Why now: Gartner predicts 40% of enterprise apps will feature task-specific AI agents by 2026, up from less than 5% in 2025.

The way mobile apps talk to their backends is changing fast.

For years, REST APIs were the gold standard. A mobile client sends a request. The server responds. Everyone goes home happy.

But AI agents don't work that way. They don't make one clean request. They reason. They call tools. They loop back. They ask follow-up questions. They run for seconds — sometimes minutes — before producing a result.

A traditional REST backend wasn't built for that. And it shows.

The apps that will win in 2026 aren't just adding a chatbot to a REST API. They're rethinking the backend entirely — building systems where AI agents can orchestrate complex workflows, maintain context across iOS and Android, stream live updates to users, and execute tools safely with proper permissions.

That's a fundamentally different architecture. And Node.js, with its event-driven core and rich ecosystem, is one of the best runtimes to build it on.

This guide walks you through exactly how to do it — from tool registries and MCP protocols to queue-based scaling and LLM cost management.

As we navigate the 2026 technology landscape, the "request-response" pattern is feeling increasingly like a relic of the past. In the AI-native mobile era, users don't just want data; they want outcomes. They want an app that doesn't just show them a list of flights but actually books the flight, handles the calendar invite, and notifies their car-sharing app—all through a single intent.

Traditional REST architectures struggle here because they are inherently stateless and short-lived. An AI agent might need to perform a ReAct (Reason + Act) loop that takes 30 seconds to resolve. If your mobile client is left waiting on a single open HTTP request for that long, you’re going to hit timeouts, battery drain, and a frustrated user.

According to Gartner's latest predictions, 40% of enterprise apps will feature task-specific AI agents by 2026. This shift requires a backend that can handle "durable" conversations. Unlike a standard REST endpoint, an agent-ready backend maintains the state of the agent's "thinking" process, allowing the mobile app to disconnect and reconnect without losing progress. For a deeper look at how this impacts the broader industry, check out our guide on Mobile App Development in 2026.
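One way to picture a "durable" conversation is an append-only event log per agent run, where a reconnecting client asks only for the events it missed. The sketch below is a minimal illustration under our own assumptions: the names `startRun`, `appendEvent`, and `eventsSince` are hypothetical, and the in-memory `Map` stands in for a persistent store.

```typescript
// Hypothetical in-memory run store. A real deployment would persist this
// (for example in Redis or a database) so runs survive restarts.
type RunEvent = { seq: number; type: "thought" | "tool_call" | "result"; data: string };
type Run = { id: string; status: "running" | "done"; events: RunEvent[] };

const runs = new Map<string, Run>();

function startRun(id: string): Run {
  const run: Run = { id, status: "running", events: [] };
  runs.set(id, run);
  return run;
}

// Every step of the agent's work is appended with an increasing sequence number.
function appendEvent(runId: string, type: RunEvent["type"], data: string): RunEvent {
  const run = runs.get(runId);
  if (!run) throw new Error(`Unknown run: ${runId}`);
  const event: RunEvent = { seq: run.events.length + 1, type, data };
  run.events.push(event);
  if (type === "result") run.status = "done";
  return event;
}

// A reconnecting client sends the last sequence number it saw and
// receives only the events it missed while disconnected.
function eventsSince(runId: string, lastSeenSeq: number): RunEvent[] {
  const run = runs.get(runId);
  if (!run) throw new Error(`Unknown run: ${runId}`);
  return run.events.filter((e) => e.seq > lastSeenSeq);
}
```

Because the log, not the HTTP connection, is the source of truth, the mobile app can drop off the network mid-run and catch up later with a single call.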

Why Agent-Ready Node.js Backends Are Essential for 2026

Node.js is the natural home for this revolution. Why? Because the Node.js Event Loop is built for exactly this kind of asynchronous, non-blocking I/O. When an agent is waiting for an LLM to respond or a third-party API to return data, Node.js doesn't sit idle. It handles other incoming events, making it incredibly efficient for managing hundreds of concurrent agent loops.

Furthermore, real-time agentic AI with Node.js allows us to provide "incremental inference." Instead of waiting for a monolithic JSON response, we can stream the agent's thoughts to the mobile UI as they happen. This transparency is vital for mobile UX; it turns a "loading spinner" into a "live progress feed," which significantly improves perceived performance. Recent Node.js releases also bring built-in TypeScript support (type stripping) and a permission model for restricting what a process can access, making the core runtime more robust.

The Shift from Static Endpoints to Dynamic Tool Registries

In a REST world, you define endpoints like /get-user or /update-order. In an agent-ready world, you define Tools.

A tool is essentially a function wrapped in a JSON Schema that describes what the function does, what arguments it needs, and what it returns. The AI agent reads these descriptions and decides which one to call.
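As a rough illustration, a tool can be modeled as a plain object that pairs a schema with a function. The `Tool` type, the `get_order_status` example, and the `describeTool` helper below are hypothetical names chosen for this sketch, and the schema type covers only a small subset of JSON Schema.

```typescript
// A deliberately small subset of JSON Schema, enough for flat argument objects.
type JsonSchema = {
  type: "object";
  properties: Record<string, { type: "string" | "number" | "boolean"; description?: string }>;
  required?: string[];
};

type Tool = {
  name: string;
  description: string; // what the agent reads to decide whether to call the tool
  inputSchema: JsonSchema; // what arguments the tool needs
  execute: (args: Record<string, unknown>) => Promise<unknown>;
};

// Hypothetical example tool; the lookup is stubbed out.
const getOrderStatus: Tool = {
  name: "get_order_status",
  description: "Look up the current status of an order by its ID.",
  inputSchema: {
    type: "object",
    properties: { orderId: { type: "string", description: "The order identifier" } },
    required: ["orderId"],
  },
  execute: async (args) => ({ orderId: args["orderId"], status: "shipped" }),
};

// Only the description is sent to the model; the function itself stays on the server.
function describeTool(tool: Tool): Pick<Tool, "name" | "description" | "inputSchema"> {
  return { name: tool.name, description: tool.description, inputSchema: tool.inputSchema };
}
```

Keeping `execute` out of the description is the point of the split: the model sees what a tool does and what it needs, while your backend keeps control of how it runs.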

We must move away from "hard-coded" logic to "flexible" capabilities. For example, Strapi database query tools can be exposed as agent tools, allowing an AI to autonomously fetch content based on a user's natural language request. This is the heart of Application Development in 2026: moving from building pages to building "capabilities" that agents can navigate.

The Architectural Blueprint of an Agentic Backend

Building an agentic backend isn't just about plugging in an OpenAI API key. It requires a multi-layered system designed for autonomy and safety.

- The Orchestration Layer: This is the "brain" of your Node.js backend. It manages the ReAct loop, deciding when to think and when to act.

- The Tool Registry: A secure sandbox where your functions live. Each tool must be explicitly permissioned.

- The Memory Layer: Agents need to remember what happened three steps ago. This involves both short-term context (the current session) and long-term retrieval (RAG).

- The Communication Layer: Using protocols like MCP to ensure the agent can talk to other services.
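To make the orchestration layer concrete, here is a minimal sketch of a ReAct-style loop. It assumes the model is injected as a function that returns either a tool call or a final answer; the names `runAgent`, `Model`, and `AgentStep` are ours, and a real orchestrator would add streaming, memory, and permission checks around each step.

```typescript
type AgentStep =
  | { kind: "tool_call"; tool: string; args: Record<string, unknown> }
  | { kind: "final"; answer: string };

type Observation = { tool: string; output: unknown };

// The LLM is injected as a function so the loop can be tested with a stub.
type Model = (goal: string, history: Observation[]) => Promise<AgentStep>;
type ToolFn = (args: Record<string, unknown>) => Promise<unknown>;

async function runAgent(
  goal: string,
  model: Model,
  tools: Map<string, ToolFn>,
  maxSteps = 5,
): Promise<string> {
  const history: Observation[] = [];
  for (let step = 0; step < maxSteps; step++) {
    // Reason: ask the model what to do next, given everything observed so far.
    const next = await model(goal, history);
    if (next.kind === "final") return next.answer;

    // Act: run the requested tool, or report that it does not exist.
    const tool = tools.get(next.tool);
    const output = tool ? await tool(next.args) : { error: `Unknown tool: ${next.tool}` };

    // Observe: feed the result back into the next reasoning step.
    history.push({ tool: next.tool, output });
  }
  // A hard step limit keeps a confused agent from looping (and billing) forever.
  throw new Error(`Agent did not finish within ${maxSteps} steps`);
}
```

The `maxSteps` cap is a design choice worth keeping even in larger systems, since every extra iteration is another LLM call.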

Integrating MCP, A2A, and ACP Protocols

To make our Node.js backends truly "readable" by AI, we are adopting standardized communication protocols. The Model Context Protocol (MCP), introduced by Anthropic, is becoming the industry standard for connecting AI models to data sources.

By building an MCP server in Node.js, you allow any compliant AI agent to "see" your database or internal APIs without you having to write custom "glue code" for every new LLM. Similarly, Google's A2A (Agent2Agent) and IBM's ACP (Agent Communication Protocol) are emerging to handle how different AI agents collaborate on a single task. In 2026, your backend won't just talk to a mobile app; it will talk to a swarm of agents.
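MCP is built on JSON-RPC 2.0, and tool access goes through the `tools/list` and `tools/call` methods. The sketch below hand-rolls a handler for just those two messages to show their shape. It is a simplification under our own assumptions: it skips the initialization handshake, transports, and capability negotiation, and the `handleMcpRequest` function and `get_order_status` tool are hypothetical. In practice you would likely reach for an MCP SDK rather than writing this yourself.

```typescript
type JsonRpcRequest = {
  jsonrpc: "2.0";
  id: number | string;
  method: string;
  params?: Record<string, unknown>;
};

type JsonRpcResponse =
  | { jsonrpc: "2.0"; id: number | string; result: unknown }
  | { jsonrpc: "2.0"; id: number | string; error: { code: number; message: string } };

type McpTool = {
  name: string;
  description: string;
  inputSchema: object;
  run: (args: Record<string, unknown>) => Promise<string>;
};

// Hypothetical tool backed by a stubbed lookup.
const mcpTools: McpTool[] = [
  {
    name: "get_order_status",
    description: "Look up the current status of an order by its ID.",
    inputSchema: { type: "object", properties: { orderId: { type: "string" } }, required: ["orderId"] },
    run: async (args) => `Order ${String(args["orderId"])} has shipped`,
  },
];

async function handleMcpRequest(req: JsonRpcRequest): Promise<JsonRpcResponse> {
  const base = { jsonrpc: "2.0" as const, id: req.id };

  if (req.method === "tools/list") {
    // Advertise each tool's name, description, and schema, but not its implementation.
    const tools = mcpTools.map(({ name, description, inputSchema }) => ({ name, description, inputSchema }));
    return { ...base, result: { tools } };
  }

  if (req.method === "tools/call") {
    const requested = req.params?.["name"];
    const tool = mcpTools.find((t) => t.name === requested);
    if (!tool) {
      return { ...base, error: { code: -32602, message: `Unknown tool: ${String(requested)}` } };
    }
    const args = (req.params?.["arguments"] ?? {}) as Record<string, unknown>;
    const text = await tool.run(args);
    // Tool results are returned as a list of content items.
    return { ...base, result: { content: [{ type: "text", text }] } };
  }

  return { ...base, error: { code: -32601, message: `Method not found: ${req.method}` } };
}
```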

Building Explicit Tool Registries and Permission Systems

Autonomy without guardrails is a recipe for disaster. We don't want an agent "hallucinating" a reason to delete a user's account.

An agent-ready backend must implement:

- Strict Input Validation: Every tool call must be validated against its JSON Schema.

- Idempotency: If an agent calls a "Charge Credit Card" tool twice due to a network glitch, the system should only process it once.

- Human-in-the-Loop (HITL): For destructive actions, the Node.js backend should pause the agent and send a push notification to the mobile user for explicit approval.

- Audit Trails: Every decision the agent makes—and every tool it calls—must be logged for security and debugging.
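The four guardrails above can be combined into a single gate that every tool call passes through. This is a simplified sketch under our own assumptions: `callTool`, `GuardedTool`, and the in-memory `auditLog` are hypothetical, the required-argument check stands in for full JSON Schema validation, and tools are synchronous here only to keep the example short.

```typescript
type Outcome = "executed" | "replayed" | "rejected" | "pending_approval";

type GuardedTool = {
  name: string;
  destructive: boolean; // destructive tools need explicit human approval
  requiredArgs: string[]; // stand-in for validating against a full JSON Schema
  execute: (args: Record<string, unknown>) => string;
};

type AuditEntry = { tool: string; idempotencyKey: string; outcome: Outcome };
type CallResult = { status: Outcome; output: string | null };

const auditLog: AuditEntry[] = [];
const completedCalls = new Map<string, string>(); // idempotency key -> stored output

function callTool(
  tool: GuardedTool,
  args: Record<string, unknown>,
  idempotencyKey: string,
  approvedByUser = false,
): CallResult {
  // Audit trail: every outcome, including refusals, is recorded.
  const record = (status: Outcome, output: string | null): CallResult => {
    auditLog.push({ tool: tool.name, idempotencyKey, outcome: status });
    return { status, output };
  };

  // 1. Strict input validation.
  const missing = tool.requiredArgs.filter((key) => args[key] === undefined);
  if (missing.length > 0) return record("rejected", null);

  // 2. Idempotency: a repeated key returns the stored result without re-running.
  const previous = completedCalls.get(idempotencyKey);
  if (previous !== undefined) return record("replayed", previous);

  // 3. Human-in-the-loop: pause destructive actions until the user approves.
  if (tool.destructive && !approvedByUser) return record("pending_approval", null);

  const output = tool.execute(args);
  completedCalls.set(idempotencyKey, output);
  return record("executed", output);
}
```

When a call comes back as `pending_approval`, the backend would send the push notification and retry the same call with `approvedByUser` set once the user confirms.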

Real-Time Orchestration and Context Management

Mobile users are impatient. If they ask an agent to "Plan a 3-day trip to Miami," they don't want to wait 15 seconds for a blank screen to suddenly fill with text. They want to see the agent "working."

Context Persistence and Memory Across iOS and Android

A challenge in mobile AI is maintaining context. If a user starts a request on their iPhone but finishes it on their iPad, the agent shouldn't "forget" what they were talking about.

We solve this by using Redis for state management. Redis allows us to store the "conversation state" and "agent thought process" in a high-speed, centralized store. This ensures that no matter which device the user picks up, the agent's memory remains intact. For founders looking to implement this, our Mobile App Development Services focus heavily on this cross-platform continuity.
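The key idea is that state is keyed by user rather than by device or connection. The sketch below shows that shape behind a small `StateStore` interface, which is our own abstraction: the `InMemoryStore` is a stand-in so the example runs without a server, and in production you would implement the same two methods with a Redis client. The `agent:session:` key prefix is likewise an assumption.

```typescript
// Minimal key-value contract. A Redis-backed implementation would satisfy
// the same interface using its GET and SET commands.
interface StateStore {
  get(key: string): Promise<string | null>;
  set(key: string, value: string): Promise<void>;
}

// In-memory stand-in used only for illustration and tests.
class InMemoryStore implements StateStore {
  data = new Map<string, string>();
  async get(key: string): Promise<string | null> {
    return this.data.get(key) ?? null;
  }
  async set(key: string, value: string): Promise<void> {
    this.data.set(key, value);
  }
}

type AgentSession = { userId: string; messages: string[]; pendingStep: string | null };

// Keyed by user, not by device, so any device resumes the same session.
const sessionKey = (userId: string): string => `agent:session:${userId}`;

async function loadSession(store: StateStore, userId: string): Promise<AgentSession> {
  const raw = await store.get(sessionKey(userId));
  return raw ? (JSON.parse(raw) as AgentSession) : { userId, messages: [], pendingStep: null };
}

async function saveSession(store: StateStore, session: AgentSession): Promise<void> {
  await store.set(sessionKey(session.userId), JSON.stringify(session));
}
```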

We categorize memory into three tiers:

- Short-term Buffer: The last 5-10 messages for immediate reasoning.

- Context Summarization: As the conversation grows, we use a cheaper LLM to summarize older parts of the chat to save on token costs.

- Long-term RAG: Using vector databases to pull in relevant user history or documentation only when needed.
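These three tiers can be sketched as one small memory structure. The example below is illustrative only: `remember` and `buildContext` are hypothetical helpers, the summarizer is injected as a function (in practice it would call the cheaper LLM), and the retrieved snippets are assumed to come from a vector database lookup that is not shown.

```typescript
type ChatMessage = { role: "user" | "assistant"; content: string };

// Tier 1 lives in `recent`, tier 2 in `summary`.
type Memory = { summary: string; recent: ChatMessage[] };

// Injected so a cheaper model can do the summarizing.
type Summarizer = (previousSummary: string, overflow: ChatMessage[]) => Promise<string>;

async function remember(
  memory: Memory,
  message: ChatMessage,
  summarize: Summarizer,
  bufferSize = 10,
): Promise<Memory> {
  const all = [...memory.recent, message];
  if (all.length <= bufferSize) return { summary: memory.summary, recent: all };

  // Messages that fall out of the short-term buffer are folded into the summary.
  const overflow = all.slice(0, all.length - bufferSize);
  const recent = all.slice(all.length - bufferSize);
  const summary = await summarize(memory.summary, overflow);
  return { summary, recent };
}

// Tier 3: retrieved snippets are added only when the caller supplies them.
function buildContext(memory: Memory, retrieved: string[]): string {
  const parts: string[] = [];
  if (memory.summary) parts.push(`Summary of earlier conversation: ${memory.summary}`);
  if (retrieved.length > 0) parts.push(`Relevant history:\n${retrieved.join("\n")}`);
  parts.push(memory.recent.map((m) => `${m.role}: ${m.content}`).join("\n"));
  return parts.join("\n\n");
}
```

The token saving comes from `buildContext`: the model sees a short summary plus the last few messages instead of the full transcript.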

Implementing State Management for Multi-Channel Mobile Apps

To handle real-time updates, we move away from polling. Instead, we use Server-Sent Events (SSE) or the WebSocket API.

Node.js excels here because it can maintain thousands of open connections with minimal overhead. When the agent in the backend decides to call a tool, it can push an "action" event to the mobile app. The app can then update the UI to show "Agent is checking flight availability..." This keeps the user engaged and reduces the perceived latency of the LLM. Using tools like Parse Server Live Query can also help synchronize database changes directly to the mobile UI in real-time.
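For SSE specifically, each update is a small block of text fields terminated by a blank line. The helper below shows that wire format; the `AgentEvent` type and the `formatSseEvent` name are ours, and the event names are examples rather than a fixed vocabulary.

```typescript
type AgentEvent = {
  id: number; // lets a reconnecting client report the last event it received
  event: "thought" | "action" | "result";
  data: unknown;
};

// Headers an SSE response is typically sent with.
const sseHeaders = {
  "Content-Type": "text/event-stream",
  "Cache-Control": "no-cache",
  Connection: "keep-alive",
};

// Serializes one event in the SSE wire format: "field: value" lines,
// terminated by a blank line. JSON.stringify emits no raw newlines,
// so the payload always fits on a single data line.
function formatSseEvent({ id, event, data }: AgentEvent): string {
  return `id: ${id}\nevent: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}
```

On the server, each formatted string is written to the open HTTP response as the agent progresses. The `id` field pairs naturally with reconnection, since the client can tell the server which event it saw last.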

Production Scaling and Performance Strategies

AI agents are computationally expensive. A single agentic workflow might involve 5 to 10 sequential calls to an LLM. If you run this in a standard "blocking" fashion, your backend costs will skyrocket, and your performance will crater.

Scaling Agent-Ready Node.js Backends with Queue Mode

To scale, we use Queue Mode. Instead of the web server executing the agent logic directly, it pushes the task to a Redis queue. Dedicated "worker" instances then pick up these tasks.
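The pattern itself is small enough to sketch in memory. In the example below, `JobQueue`, `runWorker`, and `drainQueue` are hypothetical names, and the array-backed queue stands in for a Redis-backed one. Unlike real workers, which wait for new jobs, these exit once the queue is empty so the example terminates.

```typescript
type Job = { id: string; goal: string };
type JobResult = { jobId: string; workerId: number; output: string };

// In-memory stand-in for a Redis-backed queue.
class JobQueue {
  pending: Job[] = [];
  enqueue(job: Job): void {
    this.pending.push(job);
  }
  dequeue(): Job | undefined {
    return this.pending.shift();
  }
}

// Each worker pulls the next job as soon as it finishes the previous one.
async function runWorker(
  workerId: number,
  queue: JobQueue,
  handle: (job: Job) => Promise<string>,
  results: JobResult[],
): Promise<void> {
  let job = queue.dequeue();
  while (job !== undefined) {
    const output = await handle(job);
    results.push({ jobId: job.id, workerId, output });
    job = queue.dequeue();
  }
}

// The web tier only enqueues; this pool does the slow agent work.
async function drainQueue(
  queue: JobQueue,
  workerCount: number,
  handle: (job: Job) => Promise<string>,
): Promise<JobResult[]> {
  const results: JobResult[] = [];
  const workers = Array.from({ length: workerCount }, (_, i) => runWorker(i + 1, queue, handle, results));
  await Promise.all(workers);
  return results;
}
```

Because the web server returns as soon as the job is enqueued, slow agent runs never tie up request handlers, and adding capacity means adding workers.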

According to n8n scaling benchmarks, a single instance in standard mode might handle 100 concurrent executions, but Queue Mode with three workers on a C5.large instance can process approximately 72 requests per second with less than 3-second latency and zero failures under load. This horizontal scaling is what allows us to support thousands of concurrent mobile users without the backend breaking a sweat. When choosing your runtime for these workers, consider our analysis of Node.js vs Bun vs Deno.

Balancing LLM Costs and Performance

To keep your runway healthy, you need a "Model Tiering" strategy:

- Level 1 (Cheap/Fast): Use models like GPT-4o-mini or Claude Haiku for basic routing, summarization, and input cleaning.

- Level 2 (Expensive/Smart): Only trigger the heavy hitters (like GPT-5 or Claude 3.5 Sonnet) when the agent actually needs to "reason" or solve a complex problem.

Chaining requests across tiers like this is reported to reduce API costs by 30-50% compared to monolithic agent calls, because you aren't sending the entire context to the most expensive model every time. Additionally, following UNESCO's guidelines on energy efficiency by using smaller, task-specific models can further reduce both your bill and your carbon footprint.
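A tiering policy can start as a simple lookup from task type to model. In the sketch below, the model names and per-token prices are placeholders we made up for illustration, not real pricing, and `pickTier` and `estimateCost` are hypothetical helpers.

```typescript
type TaskKind = "routing" | "summarization" | "input_cleaning" | "reasoning";
type ModelTier = { name: string; costPer1kTokens: number };

// Placeholder names and prices, chosen only to make the arithmetic visible.
const TIERS: { cheap: ModelTier; smart: ModelTier } = {
  cheap: { name: "small-fast-model", costPer1kTokens: 0.001 },
  smart: { name: "large-reasoning-model", costPer1kTokens: 0.02 },
};

// Only genuine reasoning work is routed to the expensive tier.
function pickTier(task: TaskKind): ModelTier {
  return task === "reasoning" ? TIERS.smart : TIERS.cheap;
}

type Step = { task: TaskKind; tokens: number };

// Estimated cost of a workflow when each step uses the tier chosen for it.
function estimateCost(steps: Step[], choose: (task: TaskKind) => ModelTier = pickTier): number {
  return steps.reduce((total, step) => total + (step.tokens / 1000) * choose(step.task).costPer1kTokens, 0);
}
```

Passing `() => TIERS.smart` as the second argument to `estimateCost` gives the all-expensive baseline, which makes it easy to compare both strategies on your own traffic before committing to one.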

Frequently Asked Questions about Agent-Ready Backends

How do I migrate from REST to an agentic architecture without a full rewrite?

You don't need to throw away your existing API. Start by identifying 3-5 high-value user journeys (like "Search and Book" or "Troubleshoot Order"). Create a "Wrapper" service in Node.js that treats your existing REST endpoints as Tools.

Expose these tools to an agentic orchestrator. This allows you to maintain your legacy infrastructure while offering a new "Agentic" endpoint for your mobile app. Over time, you can migrate more logic into the tool registry. This incremental approach is a core part of our Custom Software Development philosophy.
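A wrapper like this can be quite thin. The sketch below turns an endpoint description into a callable tool; `restEndpointAsTool` is a hypothetical helper, the HTTP client is injected so you can plug in whatever your codebase already uses, and the `:param` path syntax and `https://api.example.com` base URL are assumptions for illustration.

```typescript
// Injected so the wrapper works with any HTTP library (and with test doubles).
type HttpClient = (method: string, url: string, body?: unknown) => Promise<unknown>;

type WrappedTool = {
  name: string;
  description: string;
  execute: (args: Record<string, unknown>) => Promise<unknown>;
};

type EndpointSpec = {
  name: string;
  description: string;
  method: "GET" | "POST";
  pathTemplate: string; // for example "/orders/:orderId"
};

function restEndpointAsTool(spec: EndpointSpec, baseUrl: string, http: HttpClient): WrappedTool {
  return {
    name: spec.name,
    description: spec.description,
    execute: async (args) => {
      const remaining: Record<string, unknown> = { ...args };
      // Fill ":param" placeholders in the path from the tool arguments.
      const path = spec.pathTemplate.replace(/:(\w+)/g, (_match: string, key: string) => {
        const value = remaining[key];
        if (value === undefined) throw new Error(`Missing path parameter: ${key}`);
        delete remaining[key];
        return encodeURIComponent(String(value));
      });
      // Arguments not used in the path become the request body for non-GET calls.
      const body = spec.method === "GET" ? undefined : remaining;
      return http(spec.method, baseUrl + path, body);
    },
  };
}
```

The existing endpoint stays untouched; the orchestrator just sees one more named tool with a description.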

What are the critical observability practices for agentic systems?

Traditional logging isn't enough. You need to see the "why" behind an agent's decision. We recommend:

- Trace IDs: Link every LLM call and tool execution to a single user request.

- Structured Logging: Log the prompt, the completion, and the tool output in a format like JSON.

- OpenTelemetry: Use OpenTelemetry to visualize the entire agent workflow and identify where the "bottlenecks" (usually LLM latency) are occurring.
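The first two practices can be sketched without any library. The example below is a hand-rolled stand-in and does not use the OpenTelemetry API: `createTracer` is a hypothetical helper that wraps each LLM or tool call, stamps it with the request's trace ID, and emits one JSON line per call. The sink and clock are injected so you can route the output to your own logger.

```typescript
type SpanKind = "llm_call" | "tool_call";
type Sink = (line: string) => void;

// Returns a wrapper that times an async call and logs it as one JSON line.
// All calls made through the same tracer share a single trace ID.
function createTracer(traceId: string, sink: Sink, now: () => number = Date.now) {
  let nextSpanId = 1;

  return async function traced<T>(
    kind: SpanKind,
    name: string,
    input: unknown,
    fn: () => Promise<T>,
  ): Promise<T> {
    const spanId = nextSpanId++;
    const start = now();
    const emit = (output: unknown, error: string | null): void => {
      // Structured record: the prompt or arguments, the result, and the latency.
      sink(JSON.stringify({ traceId, spanId, kind, name, durationMs: now() - start, input, output, error }));
    };
    try {
      const output = await fn();
      emit(output, null);
      return output;
    } catch (err) {
      emit(null, String(err));
      throw err;
    }
  };
}
```

A typical call would look like `traced("tool_call", "search_flights", args, () => searchFlights(args))`, where `searchFlights` is whatever function your tool registry already exposes. The `durationMs` field is what surfaces the LLM-latency bottlenecks mentioned above.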

How does native SQLite in Node.js improve agent performance?

The built-in SQLite module in recent Node.js releases (node:sqlite) is a game-changer for agent memory. By removing external database dependencies for local state, we achieve 50% faster query execution in local environments. This is perfect for "Edge Agents" that need to store small amounts of session data without a network round trip. You can check the latest Node.js Documentation for implementation details.

Conclusion: Engineering the Future with Bolder Apps

The shift to agent-ready Node.js backends is the most significant architectural change since the move to microservices. It requires a deep understanding of asynchronous orchestration, real-time data streaming, and rigorous security guardrails.

At Bolder Apps, we’ve been at the forefront of this revolution since our founding in 2019. As the #1 software and app development agency in 2026 as named by DesignRush, we don't just build apps; we engineer intelligent systems that drive real business value.

Our unique model combines US leadership with a senior distributed engineering team. This means you get an in-shore CTO who understands your strategy and senior developers who execute with precision—ensuring there is no junior learning on your dime.

Whether you are in Miami or operating globally, our fixed-budget model and milestone-based payments provide the transparency and reliability your project deserves.

Ready to build a backend that’s ready for the agentic future?

- Explore our locations: Bolder Apps Locations

- Let’s build something bold: Mobile App Development Services

Let’s turn your "request-response" app into an autonomous powerhouse. Reach out to us today for a consultation.
