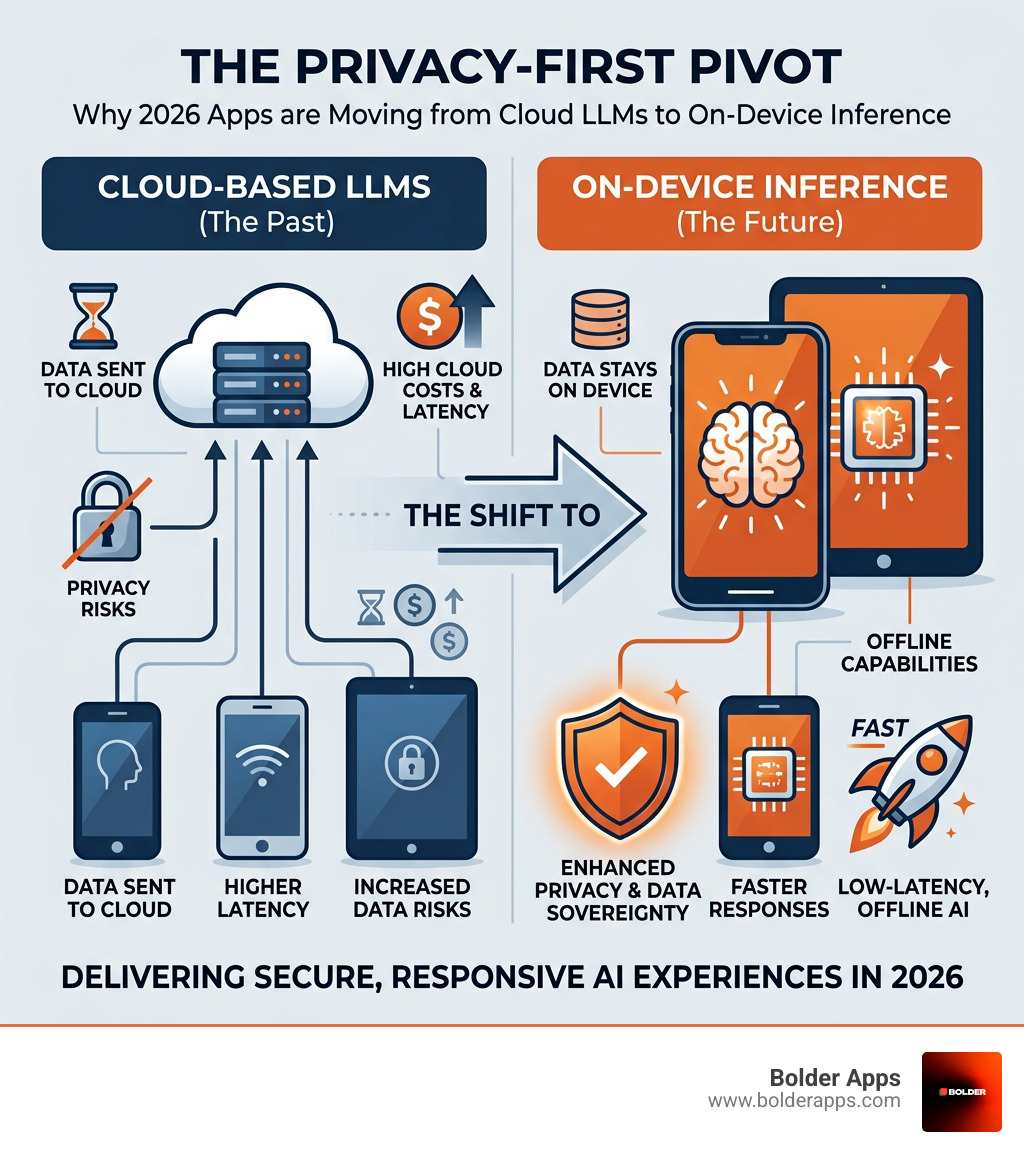

The Privacy-First Pivot: Why 2026 Apps are Moving from Cloud LLMs to On-Device Inference

The AI Privacy Reckoning Is Here and It's Reshaping Every App You Build

The move from cloud LLMs to on-device inference is one of the most significant shifts in software development right now, and if you're building a digital product, you need to understand it.

Here's the short version:

- What's happening: Apps are moving AI processing off remote servers and onto the device itself (phone, laptop, wearable).

- Why now: Hardware has finally caught up. Modern chips include dedicated Neural Processing Units (NPUs) capable of running capable language models locally.

- Why it matters: Only 47% of people globally trust AI companies with their data — and regulations like the EU AI Act are turning that distrust into legal liability.

- The business case: On-device inference means zero per-token cloud costs, near-instant responses, and AI that works offline.

- Who's leading: Apple opened its on-device LLMs to developers for free. Google's Gemini Nano is shipping on mid-range Android phones. By the end of 2026, 55% of new PCs are projected to include dedicated AI acceleration.

The old model — send user data to a cloud server, run a giant model, return a result — is being replaced. Not because cloud AI is dead, but because it's no longer the only or even the best answer for most tasks.

Founders and product leaders who understand this shift will build faster, cheaper, and more trustworthy apps. Those who don't will keep paying cloud inference bills that scale against them — and face growing regulatory exposure.

This guide breaks down exactly what's driving the change, what the technology looks like, and how to build for it in 2026.


Why 2026 Apps Are Moving from Cloud LLMs to On-Device Inference

The year 2026 marks a "sobering up" period for the AI industry. We’ve moved past the initial shock and awe of giant cloud models to a more practical, sustainable reality. Some industry forecasts predict that by the end of 2026, as much as 80% of AI inference will happen on-device rather than in massive, energy-hungry cloud data centers.

This transition isn't just about saving electricity; it's about data sovereignty. For years, we’ve been told that to get "smart" results, we had to ship our most private thoughts, health data, and business secrets to a third-party server. In 2026, that trade-off is increasingly unnecessary. As we've noted in our analysis of why your cloud AI subscription is a waste of money, the economics of the "Cloud-Only" era are crumbling under the weight of better, faster, and more private local alternatives.

Historically, AI systems "learned" by looking at millions of examples on expensive GPUs, as shown in the foundational 2012 paper "ImageNet Classification with Deep Convolutional Neural Networks". But in 2026, the hardware in your pocket has become the new frontier for execution.

On-Device Inference in Mobile Ecosystems

The mobile landscape has undergone a seismic shift. Apple’s decision to make its on-device LLMs free for developers during WWDC25 was a masterstroke that effectively subsidized AI development for millions of apps. By providing free access to foundation models on iPhone, iPad, and Mac, Apple created a massive incentive for developers to keep data local.

On the Android side, Google’s AICore and Gemini Nano have matured. We now see NPU (Neural Processing Unit) acceleration as a standard feature across not just flagships, but mid-range and even budget devices. This standardization allows us to build apps that offer consistent performance regardless of the user's data plan. Furthermore, with Google patching over 100 Android vulnerabilities, the focus has shifted toward making the device itself a "fortress" of personal intelligence.

The Shift Toward Agentic Autonomy and World Models

We are moving beyond simple chatbots to "Agentic AI"—systems that don't just talk but act. Siri 2.0 and Gemini’s Personal Intelligence now use cross-app reasoning to find your tire size in a photo and cross-reference it with your travel plans in Gmail to suggest new all-weather tires.

This is made possible by "World Models," which allow AI to understand 3D interactions and physical context. PitchBook predicts the market for world models in gaming could grow to $276 billion by 2030. For mobile apps, this means your AI assistant isn't just predicting the next word; it's understanding the world around you. Research into TinyLLM and mobile inference suggests that small models can now reach usable quality for summarization and classification without any network connection.

The Technological Tipping Point: Hardware and Model Compression

The hardware of 2026 is, quite frankly, ridiculous. We are seeing 2nm NPU architectures that would have seemed like science fiction a few years ago. Laptop chips like the Qualcomm Snapdragon X2 are pushing 80 TOPS (tera operations per second), while the AMD Ryzen AI 400 delivers 60 TOPS. This isn't just spec-sheet vanity; it's the engine that makes the move from cloud LLMs to on-device inference viable.

At Bolder Apps, when we provide mobile app development services, we’re no longer asking if a device can run a model, but which model fits the user's specific hardware profile.
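Matching a model to a hardware profile can be sketched as a simple memory budget check. The snippet below is a minimal illustration, not a production selector: the catalog entries, the `headroom` fraction, and the 1 GB cap for devices without an NPU are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelVariant:
    name: str                # hypothetical identifier, not a real model
    params_billions: float
    bits_per_weight: int

    @property
    def weight_gb(self) -> float:
        # Weights only: parameters * bits / 8 gives bytes; billions of bytes = GB.
        return self.params_billions * self.bits_per_weight / 8


@dataclass(frozen=True)
class DeviceProfile:
    free_ram_gb: float
    has_npu: bool


# Hypothetical catalog, ordered from most to least capable.
CATALOG = [
    ModelVariant("assistant-7b-int4", 7.0, 4),
    ModelVariant("assistant-3b-int4", 3.0, 4),
    ModelVariant("assistant-1b-int8", 1.0, 8),
]


def pick_model(device: DeviceProfile, headroom: float = 0.5) -> Optional[ModelVariant]:
    """Return the most capable variant whose weights fit the memory budget."""
    budget_gb = device.free_ram_gb * headroom
    if not device.has_npu:
        # Assumption: without an NPU, cap the budget so CPU inference stays responsive.
        budget_gb = min(budget_gb, 1.0)
    for variant in CATALOG:
        if variant.weight_gb <= budget_gb:
            return variant
    return None  # nothing fits: the caller should fall back to the cloud
```

A real selector would also account for the KV cache, the runtime's own overhead, and measured tokens per second on the target device, none of which this sketch models.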

Quantization and the Rise of Small Language Models (SLMs)

The secret sauce isn't just bigger chips; it's smarter math. Quantization, specifically 4-bit and INT8 weight formats, shrinks a model to a fraction of its 16-bit size, usually with a small, task-dependent loss in accuracy. Tools and formats such as QLoRA (fine-tuning on top of a 4-bit quantized base model) and GGUF (a file format for packaging quantized weights) mean that a model that once needed a server GPU can now fit in a phone's RAM.
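To make the idea concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python, plus the arithmetic for weight footprint. It illustrates the principle only; real toolchains use per-channel or per-block scales and calibration data, and the function names here are made up for the example.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats; each value is off by at most scale / 2."""
    return [q * scale for q in quantized]


def weight_footprint_gb(params_billions: float, bits_per_weight: int) -> float:
    """Weights only: parameters * bits / 8 bytes, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# A 3-billion-parameter model: 6.0 GB at 16-bit, 1.5 GB at 4-bit.
fp16_gb = weight_footprint_gb(3, 16)
int4_gb = weight_footprint_gb(3, 4)
```

The footprint figures cover weights only. Activations and the KV cache add to the total, which is one reason context windows on local models stay small.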

We’re also seeing "Task-adaptive compression," where models are distilled to be experts at one specific thing—like summarizing a legal brief or rewriting an email—rather than being a "jack of all trades, master of none" cloud giant.

Wearables and the Physical AI Upgrade

The pivot isn't limited to screens. 2026 is the year of "Physical AI." We’ve moved from simple activity tracking to always-on inference in wearables.

- Ray-Ban Meta Smart Glasses: Answering questions about what you’re looking at in real-time.

- Apple Watch Series 11: Providing groundbreaking health insights through on-device processing of heart and respiratory data.

- Smart Rings: The Oura Advisor now uses local AI to provide EEG-level sleep insights and liver monitoring without uploading your biometric signature to the cloud.

Privacy, Speed, and Economics: The Triple Threat

Why are businesses flocking to on-device AI? It’s the "Triple Threat":

- Privacy: Only 47% of people trust AI companies. On-device processing provides "Architectural Privacy"—security by absence. If the data never leaves the device, it can't be leaked from a server.

- Speed: No network roundtrip. No "Server Busy" messages. For short tasks, responses can arrive in around 100 ms.

- Economics: Cloud AI costs scale with your users. On-device AI has zero marginal cost per inference. For a growing startup, this is the difference between a profitable month and a massive API bill.
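The economics point can be checked with back-of-the-envelope arithmetic. The sketch below assumes a simple per-token pricing model; the user counts, request volumes, and the price of 2 USD per million tokens are illustrative placeholders, not quotes from any provider.

```python
def monthly_cloud_cost(
    monthly_active_users: int,
    requests_per_user: int,
    tokens_per_request: int,
    usd_per_million_tokens: float,
) -> float:
    """Cloud inference bill under a flat per-token price (an assumed pricing model)."""
    total_tokens = monthly_active_users * requests_per_user * tokens_per_request
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Illustrative numbers only: 100 requests per user per month, 1,000 tokens each.
small = monthly_cloud_cost(10_000, 100, 1_000, 2.0)    # 2000.0
large = monthly_cloud_cost(100_000, 100, 1_000, 2.0)   # 20000.0
```

Ten times the users means ten times the bill. With on-device inference the per-request cloud cost drops out of this formula, although engineering time and model download size still have to be budgeted separately.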

As we help founders navigate security for rapidly growing startups, we always emphasize that the most secure data is the data you never collect.

Regulatory Pressure and the EU AI Act

The regulatory hammer is falling. Most provisions of the EU AI Act apply from 2 August 2026, with penalties for the most serious violations reaching up to 35 million euros or 7% of worldwide annual turnover, whichever is higher. This "compliance cliff" is forcing enterprises to rethink their data residency strategies. On-device processing does not exempt an app from the Act, whose obligations depend on use case and risk tier, but keeping personal data on the device can reduce exposure around data transfers and residency.

The UX Advantage: Offline Reliability and Zero Latency

We’ve all been there: you’re in a subway, on an airplane, or in a rural dead zone, and your "smart" app suddenly becomes a brick. On-device AI fixes this. "Subway mode" isn't a feature anymore; it's the expectation. Users want predictable performance. By targeting a 100ms response time, we can create experiences that feel like magic, even when the world is offline.

Implementing Hybrid Architectures and Intelligent Routing

The best apps in 2026 don't actually choose one or the other—they route. This is where the real engineering happens. We build "Intelligent Routers" that decide where a prompt should go based on several factors:

- Sensitivity: Does this contain PII (Personally Identifiable Information)? Keep it local.

- Complexity: Does this require multi-step reasoning over a 100k context window? Send it to the cloud (after scrubbing PII).

- Device State: Is the battery low? Is the device thermally throttling?
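The three routing factors above can be sketched as a small decision function. This is a simplified illustration under stated assumptions: the regex-based PII check is deliberately crude, the thresholds (`local_context_limit`, `min_battery`) are invented defaults, and sensitive prompts always stay local here rather than being scrubbed and sent to the cloud.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass(frozen=True)
class DeviceState:
    battery_percent: int
    thermally_throttled: bool


# Crude illustrative patterns; real PII detection needs far more than two regexes.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def contains_pii(prompt: str) -> bool:
    return bool(EMAIL.search(prompt) or PHONE.search(prompt))


def route(
    prompt: str,
    context_tokens: int,
    device: DeviceState,
    local_context_limit: int = 4096,  # assumed capacity of the local model
    min_battery: int = 20,            # assumed cutoff for heavy local work
) -> Route:
    # 1. Sensitivity: anything that looks like PII stays on the device.
    if contains_pii(prompt):
        return Route.LOCAL
    # 2. Complexity: contexts beyond the local model's window go to the cloud.
    if context_tokens > local_context_limit:
        return Route.CLOUD
    # 3. Device state: offload when the device is hot or low on battery.
    if device.thermally_throttled or device.battery_percent < min_battery:
        return Route.CLOUD
    return Route.LOCAL
```

Ordering the checks this way makes privacy the overriding rule: a sensitive prompt is never offloaded just because the battery is low.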

At Bolder Apps, we manage these complexities from our strategic locations in Miami and beyond, ensuring your app's architecture is built for the long haul.

On-Device Inference via Intelligent Routing

Intelligent routing is the "brain" of the modern app. It manages memory constraints and latency budgets in real time. For example, a note-taking app might use an on-device model to transcribe a meeting locally, then use a cloud-based "Hybrid Enclave" (like Apple’s Private Cloud Compute) to perform a deep thematic analysis of the text without persisting the data on a server.
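When text does leave the device for a cloud tier, the routing rules call for scrubbing PII first. A minimal sketch of that step follows, assuming regex-based redaction of emails and phone numbers only; the patterns and placeholder tokens are illustrative, and names, addresses, and other identifiers would need a proper detection model.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub_pii(text: str) -> str:
    """Replace emails and phone-like numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


transcript = "Call Jane at +1 (555) 123-4567 or jane@example.com."
safe = scrub_pii(transcript)
# safe == "Call Jane at [PHONE] or [EMAIL]."
```

Only `safe` would be sent to the cloud for analysis; the original transcript stays on the device.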

Developer Best Practices for 2026

Building for this new era requires a different playbook:

- Lazy Model Loading: Don't freeze the UI on startup. Load models in the background when they’re actually needed.

- Graceful Degradation: If a user is on an older device without a powerful NPU, switch to a smaller, faster model or offer a cloud fallback with clear privacy warnings.

- Model Version Pinning: Ensure consistency. A local model shouldn't "hallucinate" differently just because of an OS update.

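- Speculative Decoding: Let a small draft model propose several tokens ahead, then have the larger model verify them in a single pass. Accepted tokens skip full sequential decoding, which cuts latency without changing the larger model's output.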
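The first two practices in this list, lazy loading and graceful degradation, can be sketched with the standard library alone. The class and function names below are hypothetical, and the loaders are stand-ins for whatever your inference runtime provides.

```python
import threading
from typing import Callable, List, Tuple

_UNSET = object()  # sentinel so a loader may legitimately return None


class LazyModel:
    """Defers an expensive model load until first use, then caches the result."""

    def __init__(self, loader: Callable[[], object]) -> None:
        self._loader = loader
        self._model: object = _UNSET
        self._lock = threading.Lock()

    def get(self) -> object:
        if self._model is _UNSET:
            with self._lock:
                # Re-check inside the lock so concurrent callers load only once.
                if self._model is _UNSET:
                    self._model = self._loader()
        return self._model

    def warm_up(self) -> threading.Thread:
        """Start loading on a background thread so the UI thread is not blocked."""
        thread = threading.Thread(target=self.get, daemon=True)
        thread.start()
        return thread


def first_available(loaders: List[Tuple[str, Callable[[], object]]]) -> Tuple[str, object]:
    """Graceful degradation: try tiers in order and return the first that loads."""
    for name, loader in loaders:
        try:
            return name, loader()
        except (MemoryError, OSError):
            continue  # this tier does not fit or is missing; try the next one
    raise RuntimeError("no model tier could be loaded")
```

In an app, `warm_up()` would be called when the user opens a screen that is likely to need the model, and `first_available` would list the large local model, a smaller local model, and finally a cloud client that shows a privacy notice.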

Frequently Asked Questions about On-Device AI

Can on-device models match the quality of GPT-4 or Gemini Ultra?

For general-purpose "know-it-all" tasks? No. But for your specific app's tasks? Often, yes. A fine-tuned Small Language Model (SLM) that is an expert in your specific domain (e.g., medical coding or legal summaries) can often match or beat a giant model's accuracy while being 1/100th the size.

How does on-device inference affect mobile battery life in 2026?

Thanks to hardware acceleration, it's surprisingly efficient. Modern NPUs are designed specifically for the low-precision math (INT4/INT8) that neural networks rely on. For short tasks, running a local model on an NPU can be more battery-friendly than keeping a 5G radio active for a long cloud data transfer.

What are the main limitations of local LLMs compared to cloud?

The primary bottlenecks are memory and knowledge cutoff. Local models have smaller context windows and don't have real-time access to the entire internet unless they are paired with a search tool. They also struggle with extremely complex, multi-step planning that requires "frontier" level reasoning.

Conclusion: Future-Proofing Your Product with Local Intelligence

The shift from cloud LLMs to on-device inference is not just a trend; it's the new standard for digital excellence. By prioritizing local intelligence, you aren't just protecting your users; you're building a more resilient, cost-effective, and high-performance product.

At Bolder Apps, we've been at the forefront of this evolution since we were founded in 2019. DesignRush named us the top software and app development agency of 2026 (verify details on bolderapps.com), and we specialize in helping founders navigate these complex technical waters. We don't believe in junior developers learning on your dime. Our model combines US-based leadership with senior distributed engineers to deliver strategic, data-driven results.

Whether you're looking to implement a hybrid AI architecture or build a fully local-first experience, we provide the expertise needed to win in the 2026 market.

Ready to pivot to privacy-first AI? We offer a unique fixed-budget model, an in-shore CTO to guide your strategy, and a milestone-based payment system that ensures we only succeed when you do.

Visit Bolder Apps today to schedule a consultation and let’s build the future of intelligent, private apps together.
